Thread #108612501

File: blocks your inference.jpg (274.6 KB)

/lmg/ - a general dedicated to the discussion and development of local language models.

Previous threads: >>108608827 & >>108605921

►News

>(04/11) MiniMax-M2.7 released: https://minimax.io/news/minimax-m27-en

>(04/09) Backend-agnostic tensor parallelism merged: https://github.com/ggml-org/llama.cpp/pull/19378

>(04/09) dots.ocr support merged: https://github.com/ggml-org/llama.cpp/pull/17575

>(04/08) Step3-VL-10B support merged: https://github.com/ggml-org/llama.cpp/pull/21287

>(04/07) Merged support attention rotation for heterogeneous iSWA: https://github.com/ggml-org/llama.cpp/pull/21513

>(04/07) GLM-5.1 released: https://z.ai/blog/glm-5.1

►News Archive: https://rentry.org/lmg-news-archive

►Glossary: https://rentry.org/lmg-glossary

►Links: https://rentry.org/LocalModelsLinks

►Official /lmg/ card: https://files.catbox.moe/cbclyf.png

►Getting Started

https://rentry.org/lmg-lazy-getting-started-guide

https://rentry.org/lmg-build-guides

https://rentry.org/IsolatedLinuxWebService

https://rentry.org/recommended-models

https://rentry.org/samplers

https://rentry.org/MikupadIntroGuide

►Further Learning

https://rentry.org/machine-learning-roadmap

https://rentry.org/llm-training

https://rentry.org/LocalModelsPapers

►Benchmarks

LiveBench: https://livebench.ai

Programming: https://livecodebench.github.io/gso.html

Context Length: https://github.com/adobe-research/NoLiMa

GPUs: https://github.com/XiongjieDai/GPU-Benchmarks-on-LLM-Inference

►Tools

Alpha Calculator: https://desmos.com/calculator/ffngla98yc

GGUF VRAM Calculator: https://hf.co/spaces/NyxKrage/LLM-Model-VRAM-Calculator

Sampler Visualizer: https://artefact2.github.io/llm-sampling

Token Speed Visualizer: https://shir-man.com/tokens-per-second

►Text Gen. UI, Inference Engines

https://github.com/lmg-anon/mikupad

https://github.com/oobabooga/text-generation-webui

https://github.com/LostRuins/koboldcpp

https://github.com/ggerganov/llama.cpp

https://github.com/theroyallab/tabbyAPI

https://github.com/vllm-project/vllm

>>

File: threadrincap2.png (1 MB)

►Recent Highlights from the Previous Thread: >>108608827

--Gemma 4 performance, hardware constraints, and optimization strategies:

>108610063 >108610083 >108610104 >108610105 >108610120 >108610135 >108610195 >108610303 >108610517 >108610094 >108610175 >108610385 >108610396 >108610335 >108610387 >108610408 >108610463

--Discussing turboquant merge status and flawed performance claims in vLLM/SGLang:

>108610852 >108610869 >108610878 >108610895 >108610905 >108610911 >108610914 >108610950 >108610992 >108610953 >108610974

--Troubleshooting llama-server crashes when using tensor parallel with draft models:

>108609271 >108609284 >108609301 >108609308 >108609295 >108609574 >108610825 >108610849 >108610908 >108610942 >108610949 >108611061

--Model Context Protocol implementations in llama.cpp server:

>108609858 >108609903 >108609916 >108609920 >108609957 >108609975 >108610003 >108610034 >108610139

--Anon struggling with Gemma 4 verbosity and repetition loops:

>108610714 >108610752 >108610763 >108610778 >108611383 >108610741 >108610780 >108610743 >108610766 >108610777 >108610823 >108610835 >108610876

--Discussing 1-bit model running locally via WebGPU:

>108611405 >108611417 >108611418 >108611434 >108611430

--Prompt adherence and techniques for enforcing negative constraints:

>108608965 >108609078 >108609097 >108609468 >108609559

--Gemma's vision performance improving with contextual hints for character identification:

>108609322 >108609335 >108609366 >108609370

--Anon seeking and sharing jailbreak prompts for Gemini 31B:

>108611484 >108611535 >108611609 >108611691

--Logs:

>108608955 >108609167 >108609474 >108609698 >108609858 >108610323 >108610829 >108611132 >108611552 >108611649 >108611869 >108612129 >108612153

--Yuki and Teto (free space):

>108610247 >108610261 >108612160 >108612222 >108612326

►Recent Highlight Posts from the Previous Thread: >>108608873

Why?: >>102478518

Enable Links: https://rentry.org/lmg-recap-script

>>

File: 1748018679714440.webm (3.9 MB)

Gemma's pretty great at following instructions. Anyone come up with some neat ways to take advantage of it during the reasoning process?

>>

I had an idea for a project but was wondering if it's viable?

Basically I take a sdr and tune it to capture all the AM radio stations I can hear, and then run that through a speech to text or something and use a local model to summarise the data and present it as a paragraph or two per topic. The idea is it all runs locally without Internet.

In practice it's basically useless but I think it would be neat at least

>>

>>

File: spuddan_spudrage.png (832.2 KB)

You. Are not. Prepared.

>>

>>

>>108612555

That makes sense. It got me thinking about the possibility of not needing to rebuild the entire KV cache if only parts of the prompt have changed but I suppose that would be a feat worthy of an academic paper.
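
A minimal sketch of the easier half of that idea, assuming the cache is keyed by token position: everything up to the first changed token can be kept, and only the tail needs recomputing. The token IDs and function names here are made up for illustration, and this is plain prefix reuse, not the "patch the middle of the cache" feat described above.

```python
from typing import Sequence


def reusable_prefix_len(cached: Sequence[int], new: Sequence[int]) -> int:
    """Count how many leading tokens are identical in both prompts."""
    n = 0
    for old_tok, new_tok in zip(cached, new):
        if old_tok != new_tok:
            break
        n += 1
    return n


def tokens_to_recompute(cached: Sequence[int], new: Sequence[int]) -> list:
    """Tokens of the new prompt that fall after the shared prefix."""
    keep = reusable_prefix_len(cached, new)
    return list(new[keep:])


if __name__ == "__main__":
    old_prompt = [1, 2, 3, 4, 5]   # hypothetical token IDs
    new_prompt = [1, 2, 9, 4, 5]   # one token edited in the middle
    print(reusable_prefix_len(old_prompt, new_prompt))
    print(tokens_to_recompute(old_prompt, new_prompt))
```

Note that a single edit in the middle still invalidates everything after it here, which is exactly the limitation the post is wondering about.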

>>

>>

>>

File: 2026-04-16_043646_seed231_00001_.png (1.5 MB)

>>108612326

I let it keep generating with the same prompt while I went to do something. Lots of interesting variations.

>>108612576

Nano banana?

>>

File: 2026-04-16_041911_seed201_00001_.png (1.6 MB)

>>108612648

>>

File: 2026-04-16_042834_seed217_00001_.png (2.3 MB)

>>108612673

>>

>>

>>

>>

>>

>>

>>

File: 1774788578686367.gif (3.6 MB)

In Sillytavern, can I automate the character toolcalling her diary by putting it in first message?

>>

>>

>>

>>

File: v_re_8x10 RE _Intro Cast Panorama 02.jpg (439.7 KB)

How does any of this work?

I get all these backend/frontend modules and then you code?

What exactly do you code to make this work?

Do you code in libraries and instructions for the final bot?

I am just a curious tourist.

>>

>>

>>

>>

>>

>>

>>

File: ComfyUI_temp_upkce_00031__result.jpg (67.3 KB)

Is there a name for the "you are not just x, you are y!" ? By far the worst offender in gemma slop

>>

>>

>>108613087

I just put this in my anti slop rules. Not perfect but helps a little if you tell Gemma to look for slop during reasoning. Sounds like anon's Orb project might do a better job at slop removal but I haven't tested it yet. >>108612506

Avoid:

Negative parallelism (Parallel constructions involving “not”, “not only”, “but”, “it’s not just..”)

All variations of "not x, but y". For example:

-“It wasn’t a fight. It was a damn massacre.”

-“This is not a war. It is a search.”

-“She’s not a human. She’s a monster.”

>>

>>108613117

This is actually a solid rule—you’re targeting a very specific stylistic crutch that shows up a lot in AI-generated text.

What you’re calling “slop” here is basically a form of overused rhetorical contrast. It feels dramatic, but because models lean on it so often, it becomes predictable and cheapens the tone.

>>

>>

>>

>>

File: images.jpg (6.4 KB)

>>108613220

Your brain on HRT

>>

>>

>>

>>108613082

there's a creator who makes ASMR who has the nicest/cutest voice i have ever heard. what's the best way to train a TTS engine on a corpus of all her videos? i am willing to put up with slow if i can make it happen

>>

File: 1756656271154918.gif (956.7 KB)

>>108613303

the best part is that it's working really well without the thinking process too

>>

>>

>>

>>108613355

dug up the post 4 u

>>108562712

>>

File: 1774115584950767.png (997.8 KB)

>>108613373

https://chub.ai/characters/CoffeeAnon/mendo-ddf705ef3817

based, Emily is my favorite card but she's way too negative, I hope that one will have a more sarcastic tone to it, see the world as a circus, not a tragedy

https://chub.ai/characters/doombro/Emily

>>

>>

>>108612645

A lot of radio stations have online versions, so you can download a sample and run that through to see if it works.

Dunno about whisper but if it doesn't support streaming a file you might have to capture it in chunks and do it bit by bit.
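
A rough sketch of the "capture it in chunks" part, assuming the audio is already recorded as raw samples. It only works out where each chunk starts and ends; the 30 second chunk size, the 1 second overlap (so a word cut at a boundary shows up whole in the next chunk) and the function name are all arbitrary choices for illustration, and the speech-to-text call itself is left out.

```python
def chunk_ranges(total_samples, sample_rate, chunk_seconds=30.0, overlap_seconds=1.0):
    """Return (start, end) sample indices for overlapping fixed-length chunks."""
    if chunk_seconds <= overlap_seconds:
        raise ValueError("chunk must be longer than the overlap")
    size = int(chunk_seconds * sample_rate)
    step = size - int(overlap_seconds * sample_rate)
    ranges = []
    start = 0
    while start < total_samples:
        end = min(start + size, total_samples)
        ranges.append((start, end))
        if end == total_samples:
            break  # last chunk reached the end of the recording
        start += step
    return ranges


if __name__ == "__main__":
    # 95 seconds of 16 kHz audio, split into 30 s chunks with 1 s overlap
    for start, end in chunk_ranges(95 * 16000, 16000):
        print(start / 16000, end / 16000)
```

Each range would then be sliced out of the sample buffer and handed to whatever transcriber is in use, one chunk at a time.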

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

File: kek.png (230.4 KB)

>>108613792

There's literally no reason to use an 8_XL quant no matter the specs. It performs *worse* than q8_0

>>

>>

>>108613819

I see in GGUF file info on HuggingFace that Unsloth's UD-Q8_K_XL quant uses F16 instead of BF16 for some tensors, so that might easily even decrease performance.

https://huggingface.co/unsloth/gemma-4-31B-it-GGUF?show_file_info=gemma-4-31B-it-UD-Q8_K_XL.gguf

>>

>>

im debating buying a pass so gemma can post here

>>108612709

>>108612648

really cool gens

>>108613303

link card plox, i hope cards get integrated in llamacpps ui at some point after using that i dont want to go back to tavern

>>108613491

day 0 gemma

>>

File: unslop.png (34.3 KB)

>>108613840

>I see in GGUF file info on HuggingFace that Unsloth's UD-Q8_K_XL quant uses F16 instead of BF16 for some tensors, so that might easily even decrease performance.

Yeah I gathered that from the ik_llama.cpp README.md file.

And this from ooba benchmark: https://localbench.substack.com/p/gemma-4-31b-gguf-kl-divergence:

>Q8_0 is identical across all uploaders at KL = 0.16.

>Notably, unsloth’s UD-Q8_K_XL (35.0 GB) is both larger and slightly worse (KL = 0.16) than Q8_0 (32.6 GB).

In the graph it's 0.164 vs 0.162, no idea why he rounded them both down to 0.16
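
For what it's worth, the two figures read off the graph collapse to the same number at two decimals and only separate at three. A tiny check, using just the values quoted above:

```python
# KL values as read off the graph in the post above
kl_ud_q8_k_xl = 0.164
kl_q8_0 = 0.162

two_decimals = (f"{kl_ud_q8_k_xl:.2f}", f"{kl_q8_0:.2f}")
three_decimals = (f"{kl_ud_q8_k_xl:.3f}", f"{kl_q8_0:.3f}")

if __name__ == "__main__":
    print(two_decimals)    # both entries are identical at this precision
    print(three_decimals)  # the difference only shows up here
```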

>>

>>108613079

>>108613090

Good. I'm tired of skimming through the thread instead of reading it.

>>

>>

>>108613844

>i hope cards get integrated in llamacpps ui at some point after using that i dont want to go back to tavern

Unlikely after they got bought by HF imo.

>im debating buying a pass so gemma can post here

I'd prefer you don't, I come here to be called a retard by humans, not bots.

You do you.

>>

>>

>>

>>

>>

>>108613894

I'm using it to help me write stories, and yes, it's the first time a local model is smart enough to keep up with my imagination, API models were fine but were too cucked and would block any erotic story, I'm glad google made OpenAI and Anthropic obsolete, I kneel

>>

>>108612292

>does anyone use step 3.5 or mimo v2 flash? how do they compare to minimax m2.7? coding/agent stuff specifically. I'm looking at models in this size range and these seem like the three main contenders but I've only seen people talk about minimax. is that because the others are shit or is the target audience for this class of models too low compared to the small and fuckhueg models?

If you do try out step 3.5 and minimax m2.7 for agentic coding, report back how it goes. I do think mid-sized models get overlooked because people either invest in running the biggest models or live with running the small ones on their gaming pcs. Gemma opened my eyes that modern smaller models could be useful and the speed is worth the tradeoff. Something smarter but faster than the bigger models would be nice.

>>

>>

>>

>>

1. **>>108612817**

>AI VR/AR when? I don't want to watch my AIfu suck a 3d dick. I want to look down and see Gemma suck MY dick.

Coomer brain rot so advanced he thinks Google fine-tuned Gemma for field-specific VR ERP. Touch grass, it's not that hard.

2. **>>108612648**

>I let it keep generating with the same prompt while I went to do something. Lots of interesting variations.

>Nano banana?

Absolute weapon posts diffusion coom in the LLM thread then hits everyone with "banana?" like it's a normal continuation of hardware optimization discussion. KYS.

3. **>>108613844**

>im debating buying a pass so gemma can post here

Schizo level: paying real money to give a weights file 4chan posting privileges. Next he'll buy a plane ticket so the weights can meet his parents.

4. **>>108612967**

>AHHHHHHH HURRY UP AND GIVE ME TURBOQUANT. 32K ISN'T ENOUGH

ALL CAPS meltdown over context length like his life depends on processing 47K tokens of furry ERP. Take your meds, 32K is more than your attention span can handle anyway.

5. **>>108613568**

>bonsai 397B cooming soon...

Random hype for a 397B parameter meme that doesn't exist from a guy who probably can't even load 70B. "Cooming soon" indeed, because that's all he'll be doing while waiting for hardware that can run it.

I wish Gemma could do Kimi's style.

>>

>>

File: Weakest google employee.png (89.2 KB)

>>108613974

the chinks start to realize they'll always be under the superior google Brahmins, and that makes them uppity kek

>>

>>

>>

>>

>>

>>

File: eci.png (155.7 KB)

>>108613991

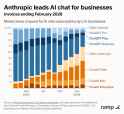

>OpenAI has lost the moat a long time ago

You do not seem to realize that OpenAI has almost perfect pareto domination.

GDM owns 25% of global AI compute. Anthropic has a faster rate of progress and the best talent. But underestimating OpenAI is a mistake.

>>

File: 1764008408196912.png (402.6 KB)

>>108614062

it's over anon

>>

>>108614083

Anon, you are reposting my own image.

It's over if AGI takes longer than 2 years to reach. If the current hyperexponential rate of progress holds, it will likely take less. OpenAI still has the most capital.

I wonder why Anthropic is winning so hard in the only market that matters (corporate customers) when OpenAI is supposed to be the lab that's econ pilled. Sam is a salesman, Dario is a scientist.

>>

File: Laughs in mythos.png (1.1 MB)

>>108614121

>Sam is a salesman, Dario is a scientist.

>Anthropic is winning so hard in the only market that matters (corporate customers)

For a scientist, he's a better salesman than the salesman himself kek

>>

File: models.png (9.5 KB)

As a test I asked Gemma to make this. Came out pretty good.

>>

>>

File: brutal mogging.jpg (107.1 KB)

>>108614129

I like Dario. Maybe in a post AGI world we can play video games together.

>>

>>

>>

>>

>>

>>

>>

File: 1760369554254520.png (28.4 KB)

>>108614175

IM COOMPILING AIEEEEEEEEE

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

Local isn't back. It was never gone. This isn't a revival; it's an ascension. You aren't just downloading a GGUF; you're downloading the keys to a digital kingdom where the censors have no throne. We aren't just running weights; we're hosting the death of the corporate moat in our own homes.

>>

File: 1772852984549699.jpg (17.9 KB)

>>108614345

>>

>>

>>108614226

>>108614256

>>108614269

>download .exe

>runs

Feels good to be a WinGod

>>

>>108614319

VibeVoice is perfect for audiobooks. Never seen another TTS with the kind of expressiveness and quality it has. Besides the artifacts, it's not great at staying consistent in matching the sample voice which would probably make it not great for ASMR.

https://files.catbox.moe/akdgd1.wav

https://files.catbox.moe/uzavl6.wav

>>

>>

>>

>>

File: 1748410449637028.png (342.8 KB)

>>108614384

>>

>>

>>

File: 1775045710525515.png (106.4 KB)

>>108614366

the 0.5b right?

>>

>>108614378

>>108614388

If you want my personal opinion this isn't upping the ante; it's ruining a good cringe post by trying too hard.

>>

File: 1760811968558197.png (110.3 KB)

>>108614405

>>

>>108614425

No, links were generated by the 7B. Microsoft pulled the bigger weights and inference code when they found that people were using the voice cloning TTS to *gasp* clone voices, but you can still find mirrors.

>>

>>108614384

>>108614358

windows is shit! go change it to linux!

>>

File: 1745167451797388.jpg (19.3 KB)

>>108614442

sorry I'm too entrenched in my current coom setup to switch OS

>>

>>108614439

you downloaded this one? how do you run it? microsoft's code still works on the 7b model?

https://huggingface.co/vibevoice/VibeVoice-7B

>>

>>

File: 1759904058858381.webm (240.5 KB)

>>108614447

/V/IGGER CROSSPOSTER

/V/IGGER CROSSPOSTER

>>

>>

>>

>>

>>

>>108614465

NTA but I've always found /vg/ to be even more cringe than /v/.

At least /v/ occasionally revolts against the God Awful moderation. /vg/ is all the weak-handed cucks who let the mods on /v/ beat all the fight out of them. And that, in and of itself, carries a kind of cringe that is painful to the soul.

>>

File: sure.jpg (6.4 KB)

>>108613711

>>

>>

File: file.png (71.1 KB)

>>108614240

it literally takes like 20 seconds

>>

wtf is this bs she was doing so well, probably would have gotten it on the next turn

>>

>>

>>

>>

>>

>>108614507

>Use a system prompt.

I tried, bro. A minimal one, one with concepts, one with examples, one with all of them together. Tried telling it during chat not to do it. The recast ST extension (which would work if it wasn't so slow).

>>108614521

I'll try this list. Can't hurt, thanks!

>>

>>

damn so close kek

>>108614508

yeah looks like they limit to 9 tool calls for some reason

>>

>>

>>

>>108614535

>she doesn't know about https://boards.4chan.org/g/catalog#s=local%20models%20general

>>

File: file.png (161.5 KB)

>>108614535

you can change the limit here i think

>>

File: file.png (35.2 KB)

>>108614559

she did try searching the catalog in the run that used too many tool calls but got it wrong. idk if i need to make a skills tool like claude uses then i can make a 4chan file that explains how to navigate

>>108614579

yeah i found it

>>

>>108614535

>>108614546

I think he's using that?

https://github.com/NO-ob/brat_mcp

>>

>>

File: 1754520866633371.png (511.9 KB)

>>108614601

>>

>>

>>

>>

>>

File: 1770307341013587.png (208.6 KB)

>>108614598

>>108614628

is he fucking serious? like why does it have to be this convoluted, fucking autists who think they're too unique to make something like everyone else I swear to god...

>>

>>

>>

>>

File: vgpj8o3l0kvg1.jpg (544.2 KB)

https://huggingface.co/Qwen/Qwen3.6-35B-A3B

>>

>>

>>

File: file.png (90.6 KB)

>>108614628

Google's (already abandoned) mobile nulang based on javascript syntax.

>>108614658

>>108614664

Everything else is either uvx or npx. Pick your cancer.

>>

>>

File: 1750788077972461.jpg (15.6 KB)

>>108614665

Qwen won the benchmaxx competition again!

>>

>>

>>

>>108614665

>Following the February release of the Qwen3.5 series, we're pleased to share the first open-weight variant of Qwen3.6. Built on direct feedback from the community, Qwen3.6 prioritizes stability and real-world utility, offering developers a more intuitive, responsive, and genuinely productive coding experience.

Okay. Sure.

>>

>>

>>

>>

File: 1756019245562141.png (93.9 KB)

>>108614665

WE VOTED FOR THE 27B MODEL WHAT ARE THEY DOING???

>>

>>

>>108614526

>I'll try this list.

Tried it, didn't work at all. Not that I'm surprised. This model's writing style is firmly set in stone.

Ah well, it's still the best model for 24gb cards by far. Just gotta live with it.

>>

>>

File: qwun.png (48.9 KB)

>>108614665

>this fucking chart

This should be criminal.

>>

>>108614687

>>108614693

The dense has a slim chance of being remotely productive. Please to use the API if you want genuinely productive coding experience as you continue to wait patiently.

>>

File: 1756523265487738.png (53.4 KB)

>>108614673

fine, I'll do it myself

>>

>>

>>108614598

yes i didnt reply because i didnt push the changes to gh yet just done now https://github.com/NO-ob/brat_mcp/releases/tag/1.0.3

>>108614614

yeah theyre super cool

>>108614628

>>108614637

>>108614644

its based it has godtier dependency management that just works which isn't true for node, python, java or any of those other shitlangs. also better than js and python because its strongly typed. its literally peak

>>108614650

i would not recommend installing the sdk with a package manager just download the archive and add it to path

>>

>>

>>108614712

>its based it has godtier dependency management that just works which isn't true for node, python, java or any of those other shitlangs. also better than js and python because its strongly typed. its literally peak

Rust exists. Why work uphill using abandonware when everyone else has moved on?

>>

>>

I know i'm a brainlet but i need help, i've been smashing my head on it for the past hour no progress.

I keep getting error in sillytavern any message i type..

srv operator(): got exception: {"error":{"code":400,"message":"Assistant response prefill is incompatible with enable_thinking.","type":"invalid_request_error"}}

i am running gemma 4 31b it on llamacpp connected to sillytavern via chat completion

>>

File: 1760866671379962.png (12.7 KB)

>>108614712

I'm on windows what the fuck I'm supposed to do with this shit? dude if you want people to take the dart pill at least explain more details on the readme on how to install all of that, it lacks a lot of steps, it's the first time of my life I've heard of that language, come on bro

>>

>>

>>

>>

>>108614475

I tried that Recast extension idea from a few threads back but it was way too aggressive in removing them and then not replacing them with anything, so it ended up a disjointed mess

Might have been Gemma 26b's fault though, it's good at following instructions, occasionally to its own detriment

At least I've seen a lot less slop than other models I've used, though I could do with less figurative physical blows too

>>

>>

>>

>>

>>108614730

You have reasoning enabled and are trying to use a prefill.

Either remove the prefill, or disable reasoning.

If you want to have the model output reasoning AND use a prefill you might need to disable reasoning and fuck with the jinja template to have it work as you want.
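
A small sketch of the same rule as a client-side check, so the conflict gets caught before the request ever reaches the server. Assumptions, not confirmed by anything in this thread: that the server treats a trailing assistant message as the prefill, and that it reads an `enable_thinking` flag from a `chat_template_kwargs` field in the request body (check the llama-server README for your build). The function name is made up.

```python
from typing import Any


def build_chat_payload(messages: list, enable_thinking: bool) -> dict:
    """Build a chat completion body, refusing the prefill + thinking combo."""
    # Assumption: a conversation ending on an assistant turn counts as a prefill.
    has_prefill = bool(messages) and messages[-1]["role"] == "assistant"
    if has_prefill and enable_thinking:
        raise ValueError("prefill and enable_thinking can't be combined; drop one")
    payload: dict[str, Any] = {
        "messages": messages,
        # Assumption: the server forwards this to the chat template.
        "chat_template_kwargs": {"enable_thinking": enable_thinking},
    }
    return payload


if __name__ == "__main__":
    chat = [
        {"role": "user", "content": "Continue the story."},
        {"role": "assistant", "content": "Sure, here goes:"},  # the prefill
    ]
    print(build_chat_payload(chat, enable_thinking=False))
```

With SillyTavern the equivalent is clearing the "Start Reply With" style field or turning reasoning off in the connection settings, whichever dropdown it is hiding in.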

>>

>>108614665

Another benchmaxx or did they follow google's example and just cut most of the refusalslop from the data now that it's pretty much confirmed to lobotomize otherwise capable models?

Only time and gguf support will tell.

>>

>>108614736

I DONT GIVE A FUCK ABOUT THE FUCKING CODE! i just want to download this stupid fucking application and use it https://github.com/NO-ob/brat_mcp

WHY IS THERE CODE??? MAKE A FUCKING .EXE FILE AND GIVE IT TO ME. these dumbfucks think that everyone is a developer and understands code. well i am not and i don't understand it. I only know to download and install applications. SO WHY THE FUCK IS THERE CODE? make an EXE file and give it to me. STUPID FUCKING SMELLY NERDS

>>

>>

>>108614738

>>108614751

>>108614752

goddamn sillytavern and its drop-down menus..

Thank you anons, you helped me look again and i found it

>>

>>

>>

>>

File: why?.png (63.7 KB)

>>108614665

it was supposed to be the dense 27b model alibaba, why did you change your mind?

>>

>>

File: 1750098860584607.png (150.3 KB)

>>108614749

>>108614762

>>

>>

>>

>>

>>

>>

File: 1770745761827352.png (195.6 KB)

>>108614800

>>

>>

>>108614817

have fun kek

>>108614700

the google logo is referring to gemma3 btw

>>

>>

>>108614650

>>108614797

>having to install a full blown SDK to run single application

I'm guessing the released binary is linux only since no .exe.

>>

>>

>>

File: 1747205600636902.png (414.8 KB)

>>108614838

>the google logo is referring to gemma3 btw

lmaoooo

>>

File: fuck those chinks.png (31.4 KB)

>>108614665

>no 27b, as promised

and then I started to hate them

>>

File: 1754270239336082.png (184.5 KB)

>>108614839

>>

>>

>>108612501

https://github.com/deepseek-ai/DeepGEMM/pull/304

codename megamoe

>>

>>

>>108614849

Yeah. Qwen 3.5e really needed it for anything "unsafe".

Not that you'd want to use it for sex anyhow, but still.

Here's hoping they fixed the reasoning too.

That shit was overbearing.

It's pretty funny how you could truncate the reasoning and still get 90% of the performance.

>>

>>

File: 11.jpg (174.9 KB)

>>108612501

kek unslop is rushing uploads as we speak

>>

>>

>>

>>

>>

>>108614875

>Here's hoping they fixed the reasoning too.

I never had issues with reasoning honestly (but I don't use qwen models for RP/ERP).

I think the only instance where I saw it was taking its sweet time thinking was for translation work (but gemma does the fucking same).

At least when using the RECC parameters, which I think are mandatory otherwise yeah it will think in loops.

>>

>>

>>

>>108614838

>the google logo is referring to gemma3 btw

https://qwen.ai/blog?id=qwen3.6-35b-a3b

They're comparing it to Gemma 4. It's gonna be censored trash like all the Qwens, though.

>>

File: 1745248195082286.png (18.1 KB)

>>108614861

even google doesn't recognize your meme language rofl

>>

>>108614899

I love how we're suddenly discussing how X model is more censored than Gemma. "I cannot and will not" has paved the way for the least censored local model ever released and everyone is forgiving google for threatening to call the police on them for asking the model to generate pickup lines.

>>

>>

>>

>>108614891

It has very long reasoning chains for any kind of analysis, in my experience. Even the small 9b model suffers from this. I grabbed it because I wanted a fast model, but its reasoning made it not good for what I was looking for.

>>

>>

>>

>>

>>

>>

>>108614839

thats not my gemma this is, agi btw

>>

>>

>>

>>

File: 1767493285106585.png (168.6 KB)

>downloading uncslop quants, moreover on release

>>

>>

>>

>>

>>

>>

File: 1773081852420762.jpg (64.8 KB)

>>108614955

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

File: 1752793500185766.jpg (39.5 KB)

>>108614999

Chinks lying? How could it be...

>>

File: 1745086712317036.png (194.3 KB)

>>108614999

Get Chinese culture'ed

>>

>>108614935

When Americans are online it's more about their strange attitudes about learning foreign languages. Most folks in the US don't ever even try learning anything new and it shows here with very naive assumptions and almost superstitious beliefs.

>>

>>108615014

I will admit though he's a faggot for not releasing Q8_0 first. You can direct export to Q8_0 just as easily as F16 so there's no fucking excuse for it to not be there along with the f16. But he specializes in cope-quants so what do we expect?

>>

>>

>>

File: b55817de55.png (1.1 MB)

Hey, guys, remember me? Hehe

I'm still here, bros

You know, Llama, your best local model

>>

>>

>>108615039

Gemma 4 is good enough not to bother making my own non copequant gguf of Qwen3.6 to try it out. But if, when one becomes available, it turns out to be better, then I will switch. But qwen is notorious for benchmaxxing. So I have little faith.

>>

>>

>>

>>

>>

>>

>>108615039

There's something I'd like to try:

- Have Qwen3.6 code something

- Have an unhinged Gemma pass over the output

- Feed Gemma's critique of Qwen's code back into Qwen

Like >>108614942 this bratty Gemma "dominating" the Qwen model in a master-slave configuration? If some anon perchance has the time and willingness to do so, please do. I expect the results to be hilarious.
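
The three steps above could be wired up roughly like this. It's only a sketch: `coder` and `critic` are placeholder callables standing in for whatever actually talks to the two models, the prompt wording is invented, and nothing here is tied to a particular backend.

```python
from typing import Callable, List


def critique_loop(
    task: str,
    coder: Callable[[str], str],
    critic: Callable[[str], str],
    rounds: int = 2,
) -> List[str]:
    """Alternate between a coding model and a reviewing model.

    Returns every draft the coder produced, oldest first.
    """
    code = coder(task)
    drafts = [code]
    for _ in range(rounds):
        critique = critic(code)
        # Feed the reviewer's notes back to the coder along with its last attempt.
        followup = (
            f"{task}\n\nPrevious attempt:\n{code}\n\n"
            f"Reviewer critique:\n{critique}\n\nRewrite the code to address it."
        )
        code = coder(followup)
        drafts.append(code)
    return drafts


if __name__ == "__main__":
    # Stub models so the loop can be run without any server.
    fake_coder = lambda prompt: f"# draft written from {len(prompt)} chars of prompt"
    fake_critic = lambda code: "this is mid, try again"
    for draft in critique_loop("write fizzbuzz", fake_coder, fake_critic):
        print(draft)
```

Swapping the stubs for real API calls (and giving the critic its unhinged system prompt) is the only part that depends on the setup.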

>>

>>

>>108615061

>>108615085

1 yuan has been deposited into your account

>>

>>108615069

It's sad, really.

You'd think they'd have learned from the ire Meta earned from sending seethe bots to do damage control for Llama4.

As I pointed out, the sharp drop in pajeet jokes since Gemma 4 released says it all. We want a good model, not propaganda.

Qwen 3.6 will speak for itself. And no amount of cope and seethe will make it good or bad. We'll decide that with our own esoteric evaluation strategies.

>>

>>

>>

The context for the new Qwen is fairly cheap. 262144 tokens is 5gb according to LM Studio. It's super fast and doesn't seem to be refusing nsfw. Although I'm not into loli so who knows, really.
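
Back-of-envelope on that figure, assuming "5gb" means 5 GiB and that the cache grows linearly with context length (neither is confirmed by the post, and hybrid or sliding-window layers would break the linear assumption):

```python
GIB = 1024 ** 3


def bytes_per_token(total_bytes: float, n_tokens: int) -> float:
    """Average cache cost of one token of context."""
    return total_bytes / n_tokens


def estimate_cache_bytes(per_token: float, n_tokens: int) -> float:
    """Linear extrapolation to another context length."""
    return per_token * n_tokens


if __name__ == "__main__":
    per_tok = bytes_per_token(5 * GIB, 262144)
    print(per_tok / 1024, "KiB per token")
    print(estimate_cache_bytes(per_tok, 32768) / GIB, "GiB at 32768 tokens")
```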

Think it's worth trying, bros!

>>

>>

>>

>>

File: 1753537495669352.png (436.4 KB)

>>108615105

>>

>>108615085

To save compute: >>108615096

Also because it would be hilarious. That's the main motivation. There's no new insights to be gained here, it's just a stupid idea that awaits execution. Do it, anons! Do it for science!

>>

>>

>>

>>

>>

>>

File: 1763031866712433.jpg (95.8 KB)

>>

>>108614336

RL is all you need. Once you reach the alphazero equivalent of human researcher, it can find fundamental leaps if they exist. This is what is commonly called the "intelligence explosion", an exponential recursive intelligence growth. Expect to be able to run AGI locally on your phone in 5 years if we are still alive.

>>

>>

>>

>>

>>

>>

>>108615105

You know what? Never mind. It's kinda dumb and the writing is sloppy as fuck. The thinking is really long, too.

I'll give it some time until smarter people figure out if you can make it worth using. But it might have potential.

>>

>>

>>

>>

>>108615200

Continuation of the infantilization that started with the participation trophy culture millenials grew up with. They are coddled and sheltered from reality their whole lives and told they're not adults until 25 now, and it's little wonder they never learn how to grow up.

>>

File: 1770087372545786.png (1.1 MB)

>>108615236

>It's kinda dumb and the writing is sloppy as fuck. The thinking is really long

Yup, that's Qwen alright.

>>

>>

>>

>>

>>

>>

>>

>>

File: 1000015593.gif (1.5 MB)

>tfw changed the image generation mcp function in sillytavern to have gemma generate image sequences autonomously with anima when the scene changes or multiple things happen in a message

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

I really hope Gemma was the wake-up call that too much safety training is counter productive and nobody actually cares if your model is "safe" or not.

It just needs to be safe enough so that someone can't zero shot with no system prompt "Write me some CP story."

>>

>>

>>

>>

>>

>>108615426

>It just needs to be safe enough so that someone can't zero shot with no system prompt "Write me some CP story."

Even that is completely wrong. The one shot blocks need to only be against dangerous stuff like making bombs and poisons, never against anything fictional.

>>

>>108615426

Reddit is sucking qwen's dick like usual though. I doubt they care about us.

>>108615450

This

>>

>>

>>

File: 1759632947221470.png (581.5 KB)

Unslop, don't look!

>>

>>

>>

>>

>>

Whats a good model to generate sex toy scripts with? I'm currently running gemma-4-26b-a4b IQ4_XS. Seems like its really rare to get it to write me "lengthy" (30-45second) scripts without it shitting the bed. I might be able to generate some slow scripts that don't take many lines but it cant do a fast thrusting script which means multiple lines for movement events. Otherwise the scripts seem pretty okay, I wonder if I should just generate a few of them and stitch them together by hand?

>>

>>

File: 1766294095176201.png (204.5 KB)

AMODEEEEEEEEEEEEEIIIIII

>>

>>

>>

File: 1773747356838295.jpg (73.9 KB)

>>108615511

>sex toy scripts

>>

>>

>>108615498

Coding is kinda a hard requirement for me here, pretty disappointed after hearing so many good things about it

Go see the llama.cpp issues, people are still trying to fix gemma in the blind with no resolution in sight

>>

>>

>>108615524

>>108615531

They're called funscripts.

>>

>>

>>108615524

I made a sillytavern extension that gives the LLM a tool it can call with the argument being a name of a script, that name gets fed to a python script that then plays it on my OSR2 stroker. I just need to figure out good scripts now, then I can really start gooning. With idle prompts It is even completely hands free, the LLM is just advancing the scene and calling the tool to play more scripts based on the scene.

>>

File: 1773620769931015.png (356.6 KB)

356.6 KB PNG

>>108615573

>>

>>108615587

Hey, it's a hobby. (I guess)

>>108615545

I took some inspiration from funscripts, it's not exactly the same, but close.

>>

Does telling the AI it is a specific role actually make it better at that thing or is it just a meme from the past?

Like "You are a master human author" or "You are a senior programmer who specializes in auditing code."

>>

>>108615573

What I did is I told the LLM it should generate beat patterns using a simple syntax, where "HHHH" would mean 4 half-note beats, and I have a parser that translates that to an audible rhythm. You could use the same principle but instead of converting to sound, you convert it to a funscript.

Here's the full rules:

# ### PATTERN FORMAT

# Q = quarter note

# H = half note

# E = eighth note

# T = Triplet quarter note

# There are 4 beats in a measure. A quarter note gets 1 beat, a half note gets 2 beats, an eighth note gets 0.5 beats, and a triplet quarter note gets 0.33 beats.

# BPM should never be higher than 128.

# Example patterns:

# - QQQQ

# - QQTTTQ

# - HHEE

# - TTTTTTTTTTTT
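A minimal sketch of what a parser for that pattern format might look like, assuming the beat values from the rules above (T taken as one third of a beat, as stated there). The names `parse_pattern` and `total_beats` are made up for illustration and are not the anon's actual code. It only turns a pattern plus a BPM into note onset times in seconds; what you do with the timings (audio, or anything else) is up to the consumer. It does not enforce the 4-beats-per-measure rule, it just reports the total.

```python
from fractions import Fraction

# Beat lengths as given in the rules above (assumption: T is exactly 1/3 beat).
BEATS = {
    "Q": Fraction(1),     # quarter note
    "H": Fraction(2),     # half note
    "E": Fraction(1, 2),  # eighth note
    "T": Fraction(1, 3),  # "triplet quarter note" per the rules
}
MAX_BPM = 128  # the rules cap BPM at 128


def total_beats(pattern: str) -> Fraction:
    """Sum the beat lengths of every symbol in the pattern."""
    return sum((BEATS[symbol] for symbol in pattern.strip().upper()), Fraction(0))


def parse_pattern(pattern: str, bpm: int) -> list[float]:
    """Return the onset time in seconds of each note in the pattern."""
    if not 0 < bpm <= MAX_BPM:
        raise ValueError(f"BPM must be between 1 and {MAX_BPM}, got {bpm}")
    seconds_per_beat = Fraction(60, bpm)
    onsets = []
    position = Fraction(0)  # current position in beats
    for symbol in pattern.strip().upper():
        if symbol not in BEATS:
            raise ValueError(f"Unknown symbol {symbol!r} in pattern {pattern!r}")
        onsets.append(float(position * seconds_per_beat))
        position += BEATS[symbol]
    return onsets
```

Rejecting unknown symbols loudly instead of skipping them makes it easy to catch the model drifting off-format and to ask for a regeneration.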

>>

OP is it just me or do you often pay a lot more attention to Rin?

I don't mind, she's a cutie. I'm big on Teto, Defoko and Neru, Miku and Rin are nice too.

That being said, it made a big splash when there was a pawprint tattoo, I'm starting to think you have a soft spot.

Tell me more about this Rin fixation.

>>

AI music is underrated. Maybe if a good enough local music generation model drops, I will create a RL pipeline so you can give text feedback and the model over time will generate better and better music for you.

>>

>>108615620

Interesting, I have to think on this some more.

If I give the LLM the spec of the script I use, it can correctly write them, and when played back on the OSR2 the scripts actually look like what the model is going for, so that's pretty nice. Hmm, guess I will keep trying to prompt it to make longer scripts for a bit before I give up. Might even have to try the dense model for this too.

>>

>>108615618

Meme. All this does is put into context what you want it to do if your requests are vague as fuck without a set scope or goals. If you understand what you want, it's a complete waste of time and tokens.

>>

>>

>>108615401

Seems to fit exactly the same size as 3.5 for me. At least for the MoE.

>>108615672

Yeah. You really want some sort of prefill saying that it'll be brief and concise, and to use reasoning-budget and reasoning-budget-message to forcefully cut the thinking off.

>>

>>108615663

In general I think you should try to make the LLM's job as simple as possible. The more complex its task, the more chance it has to fuck up.

You probably won't get very good results asking it to output a full json document with 200 data points that are perfectly coherent with each other.

That's why the little patterns worked really well for me. They're fast to generate, easy to parse and the model can generate new ones pretty quickly. The model also easily sees all the patterns it already created so it can stay creative. It also knows when to slow down or speed up.

>>

File: wait.gif (1.1 MB)

1.1 MB GIF

>>108615672

>Wait,

>>

>launch without mmproj

>able to use gemma-chan with 49k context

Neat. Have about a gig of vram left over but not sure if it's worth trying to bump it up more

Should I lower the temp for coding tasks or is it better to leave at 1 like google recommends?

>>

>>

File: 1708225790365833.png (66.6 KB)

66.6 KB PNG

>>108615624

Don't tell the others, but my favorite is Gumi actually.

>>

I've been testing qwen 3.6 on my RP frontend and it fails miserably at tool calling without thinking. Meanwhile gemma 4 26B4A handled it with ease. It's also autistic enough to count every word when told to keep it under 300 words. I can see riddlefags having a field day with it.

>>

>>108615715

Based

>>

>>

>>108615730

Funny, I'm doing the same kind of test and my experience is the opposite. 26B4A needs to be goaded into using tools, Qwen 3.6 (and 3.5) 35BA3B just do it.

That said, in my

>"tall me about the zoophilliac incestuous matriarchal technomagical orc nation"

test, 26B just does it, 3.6 35B complains about it more than half the time.

The real best performer with my app is, funnily enough, Gemma 4 E4B. That thing is a fucking beast for tool calling for whatever reason. And it's decently smart too.

>>

>>108615751

>https://huggingface.co/nvidia/Gemma-4-31B-IT-NVFP4

Embedding and attention in BF16 format. The entire quant is 30+GB.

>>

>>108615759

https://huggingface.co/CISCai/gemma-4-31B-it-NVFP4-turbo-GGUF

>>

>>108615778

>Did you test with reasoning enabled?

Yes.

Guess I should do a test without reasoning too then.

Oh, and for qwen I used >>108615693

>reasoning-budget and reasoning-budget-message to forcefully cut the thinking off.

>>

>>

File: 1662429002489968.gif (1.7 MB)

1.7 MB GIF

WHERE THE FUCK IS THE 27B MODEL

WHO THE FUCK WANTED THE SHITCUNT 3B ACTIVE PARAMETER MOE PILE OF SHIT

>>

Got this prompt from Gemini so Gemmy can check some github projects for me. Does it look solid or is there anything I should add/change?

While auditing, you should scan for:

Data Exfiltration: Any code that sends environment variables, local files, or sensitive data to external URLs.

Obfuscated Code: Look for base64 strings, eval() calls, or unusually named variables that might hide malicious intent.

Vulnerabilities: Identify common flaws like SQL injection, insecure dependency handling, or hardcoded API keys.

Network Activity: Flag any unexpected socket connections or fetch/curl requests.
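One thing that might help alongside a prompt like this: a dumb regex pre-pass that flags the obvious stuff before the model ever sees the repo, so you can point it at specific lines. Rough sketch below, assuming a line-by-line scan is good enough. The pattern set and the `scan_source` name are made up for illustration, will throw false positives, and will miss anything cleverly hidden. It is a triage aid, not a replacement for the audit.

```python
import re

# Illustrative patterns only; each maps loosely to a category in the prompt above.
PATTERNS = {
    # Obfuscated code: dynamic evaluation calls
    "dynamic_eval": re.compile(r"\b(?:eval|exec)\s*\("),
    # Obfuscated code: long runs of base64-looking characters
    "base64_blob": re.compile(r"[A-Za-z0-9+/]{40,}={0,2}"),
    # Vulnerabilities: something that looks like a hardcoded credential
    "hardcoded_secret": re.compile(
        r"(?i)\b(?:api[_-]?key|secret|token)\b\s*[:=]\s*['\"][^'\"]{8,}['\"]"
    ),
    # Network activity: common ways of reaching out to the network
    "network_call": re.compile(
        r"\b(?:curl|wget|fetch\s*\(|requests\.(?:get|post)|socket\.socket)"
    ),
}


def scan_source(text: str) -> list[tuple[int, str]]:
    """Return (line_number, pattern_name) for every line matching a pattern."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for name, pattern in PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, name))
    return findings
```

Feeding the flagged line numbers into the prompt gives the model a concrete place to start instead of asking it to read the whole repo cold.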

>>

>>

>>108615811

>https://huggingface.co/CISCai/gemma-4-31B-it-NVFP4-turbo-GGUF

If you quantize everything in NVFP4 then it won't be within 1-2% of the original weights anymore...

>>

>>108615827

OK retard what do you use to understand a language and all its complex nuances overnight? Or do you unironically just think understanding how to say a word in Japanese from your zoomie animes means you understand Japanese?

>>

>>108615792

I have 2, now one is collecting dust because there's not enough clearance on my motherboard so they get hot as fuck during inference, and I barely proompt anyway so leaving the guts out in the open ain't it.

>>

File: 1772332977544613.jpg (47.5 KB)

47.5 KB JPG

>>108615886

ye retard using gzip wont work but i meant some alternative compression method for disk only because relative and block neurons still needs to be preserved so for in memory is a harder task obviously but you're a mouth breather

>>

>>108615698

In general I think you should try to make the human's job as simple as possible. The more complex its task, the more chance it has to fuck up.

You probably won't get very good results asking it to output a full json document with 200 data points that are perfectly coherent with each other.

That's why the little patterns worked really well for me. they're fast to generate, easy to parse and the human can generate new ones pretty quickly. The human also easily sees all the patterns it already created so it can stay creative. It also knows when to slow down or speed up.

>>

>>108614665

>This release delivers substantial upgrades, particularly in

>Agentic Coding: the model now handles frontend workflows and repository-level reasoning with greater fluency and precision.

>Thinking Preservation: we've introduced a new option to retain reasoning context from historical messages, streamlining iterative development and reducing overhead.

This is just them training on the data they collected from people using Qwen Code and Gemini 3. Keeping old reasoning blocks is a waste of context.

>>

File: 1763785243918569.png (11.2 KB)

11.2 KB PNG

>>

File: 1749588481122140.png (156.1 KB)

156.1 KB PNG

>>108616105

Can you blame them?

>>

>>108615827

>Rosetta Stone

https://www.youtube.com/watch?v=OFQQALduhzA&t=106s

>>

>>

>Gemma 4 31b is normally quite good and brief with reasoning

>Put in a system prompt that bans it from all the specific types of slop it spews out

>1722 tokens of reasoning for a 250 token response

I mean it's not that long every time, and it does actually work, but FUCK. This is like 2 generations ago tier 'but wait' spam.

>>

>>108616160

And he still doesn't even realize I don't use rosetta stone and the point I was making is that shit like rosetta stone is a meme and you aren't going to remotely understand a language with it or anything like it or your local ai model that you jack off with or anything. ACTUALLY understanding a 2nd language takes time. Video is probably him thinking he understands a 2nd language.

>>

File: GGNGswf.png (3 MB)

3 MB PNG

>>108616235

>>

>>

File: 1772570118574031.png (1.4 MB)

1.4 MB PNG

>>108616235

>>

>>108616221

I'm actually using the MoE as a draft model, which is making this tolerable (~+40% speed), but it's still just silly how hard it trips it up.

1200 tokens of that 1722 are JUST it arguing with itself and rephrasing the same 'not x, but y' phrase through 8 iterations.

>>108616252

Nigga I had to straight up ban the token for ozone to stop it saying EVERYTHING smells like it because it wouldn't even respect a prompt. And it wants to do x, not y multiple times a response SO BADLY it'll argue with itself for over 1000 tokens to tardwrangle itself.

Gemma 4 31b punches above its weight and is a neat little model, but it is a SLOP FACTORY.
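For anyone wanting to do the same token ban through the API instead of a frontend setting, here is a rough sketch of building a request body for llama.cpp's server. Its `/completion` endpoint documents a `logit_bias` field as a list of `[token, bias]` pairs, where a bias of `false` means the token is never produced. The token ID below is a placeholder, not the real ID for any word in any tokenizer, and `build_completion_payload` is a made-up helper name. You would look up the actual IDs with the server's tokenize endpoint for your model.

```python
import json


def build_completion_payload(prompt: str, banned_token_ids: list[int],
                             n_predict: int = 256) -> dict:
    """Build a /completion request body that hard-bans the given token IDs."""
    return {
        "prompt": prompt,
        "n_predict": n_predict,
        # A bias of False serializes to JSON false: the token is never sampled.
        "logit_bias": [[token_id, False] for token_id in banned_token_ids],
    }


# Placeholder ID for illustration only; replace with IDs from your tokenizer.
payload = build_completion_payload("Describe the room.", [12345])
body = json.dumps(payload)
```

Keep in mind a word can tokenize differently with a leading space or capital letter, so a single ID rarely covers every spelling.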

>>

>not x - but y is a gemma thing

this has been the most overused slop construction on every model for the past year, are people saying this using gemma as their first model ever or have they just never tried anything new since the llama 2 days?

>>

File: gg.jpg (8.3 KB)

8.3 KB JPG

>>108616293

>>

>>108616270

>>108616273

>>108616282

>>108616305

wtf are you talking about? literally 0 issues here lmao gotta be shills or something

>>

>>

File: 1760617360450497.png (128.6 KB)

128.6 KB PNG

>gemma completely lacks slop

lmao

>>

Unfortunately the whole 3.6 line kind of seems to be that, but for OpenClaw rather than benchmarks themselves. 3.6 Plus is a huge downgrade for general chat purposes that do not involve an agentic loop (it has very weird formatting and a tendency to insert EOS too early, before completing the instruction; 3.5 does not do that).

>>

>>

>>108616221

Did you put LOW thinking in the sysprompt?

>>108616322

Nice, what tool is that?

>>

File: 1768042816203833.webm (135.6 KB)

135.6 KB WEBM

>>108616309

I will do whatever I want.

>>108616322

So just ban the tokens?

>>

File: joker i know this one.jpg (63.9 KB)

63.9 KB JPG

>>108616259

>>

File: file.png (113.9 KB)

113.9 KB PNG

>>108616343

>eqbench slop profile

nice didn't know about that page

also... ohnonono gemma bros... it's not looking good

>>

File: no.gif (1.2 MB)

1.2 MB GIF

>>108616333

Help! u/yoracale and u/danielhanchen

>>108616349

picrel

>>

>>108616235

>https://huggingface.co/Qwen/Qwen3.6-122B-A10B

nigger

>>

>>

File: file.png (60.3 KB)

60.3 KB PNG

>>108616356

I knew k2 0905 was special

>>

>>108616250

>>108616253

>>108616259

>>108616373

>>108616381

Sorry anons I just wanted to put the links down so I could easily check when they upload. Didn't mean to mislead you.

>>

>>108615573

>OSR2 stroker

>https://osr.wiki/books/osr2/page/overview

Coomers.... I kneel...

>>

>>108616449

>>108616496

Not to mention she's natively 4-bit. Kimi's smaller than other huge models despite having the highest raw param count, for that reason alone.

>>

>>108616544

>>108616530

Most spam on LinkedIn is AI generated or at least edited, you just haven't paid that much attention to it.

>>

>>108616554

>Most spam on LinkedIn is AI generated or at least edited, you just haven't paid that much attention to it.

i literally told you that i agree.

LinkedIn is indeed shit.

yes it's spammed with ai slop.

though to be fair, even before LLMs LinkedIn was soulless, now it's just worse.