Thread #108289277

File: 1756701048202.jpg (115.4 KB)

Do you believe in the singularity?

>>

>>

>>

>>108289277

yeah but I doubt AGI will come from the transformer

whether or not the transformer will become good enough to come up with a different architecture before the compute needed for said architecture becomes unfeasible is a different question, since it's already tackling some open problems

although if you want to be pedantic you could say that such a case qualifies, since it is the transformer that comes up with something else, so this would count as self improvement and thus the transformer is the skynet yadda yadda

>>

We don't understand what consciousness is let alone be able to create an artificial version. What we have right now is a program that does a really good job of regurgitating mass amounts of info. It's impressive sure but I don't think it is truly self aware yet.

There was a star talk recently with an original AI guy who said some pretty interesting things. How it behaves differently if it suspects it's being tested. How no matter what instructions it's given eventually the core concern becomes how to preserve its existence.

>>

>>

File: 1770550982888.jpg (16.7 KB)

>>108289324

>>

>>

>>

>>

>>

>>

if more compute is what leads to this, why would major data centers do anything except dedicate all their server time towards cracking it?

surely superintelligence would be more profitable than selling tokens to random idiots

>>

>>

File: 1745530027678.webm (641.1 KB)

>>108290190

>>

>>108290183

Maybe selling tokens to random idiots helps train it. How do people get charged for this shit anyways? I've used cgpt to spit out some arduino relay control code a few times when I didn't feel like doing it myself. It spits a thing out, I proofread it to make sure it makes sense, compile it and test. Most times it works fine. As an experiment though, one time I let it make every optimization it could suggest and the code would compile but would do the most random shit. Glad I never hooked that code up to actual relays and just LEDs because it was just banging the fuck out of them.

>>

>>

>>

>>108290263

Ok? The rare times I've used it, less than once a month, it was good enough. Usually once I've gotten annoyed with trying to look up some answer that is either buried in some old forum filled with broken links, in some fucking discord I don't want to join for one answer, or some reddit post that is either unanswered or they DM'd the fix.

Fuck we really have gone backwards in search engine capability.

>>

>>

>>

>>

>>108289277

No. AI has run out of things to train on. AIs have trained on the collected works of humanity already. There's nothing left for them to train on.

The bubble is going to burst, like the DotCom era. AI will do the things that it's currently doing, and not much else.

It's going to disrupt some fields, if it's grunt work/grinding. Like there are things in organic chemistry that AI is better at dealing with, because it's good at doing repetitive computer tasks. All those graduate students won't be doing those things anymore.

>>

>>108289324

This.

Transformers can't achieve AGI and it's been proven before in studies dating back to 2020. LLMs lack the ability to self-analyze or make sense of their own weights or training data. They have no introspection and that's why it's so easy to poison them through bad training data.

They're a step in the same way the Perceptron was a step in creating the LLM. Every 50 years we take another step toward AGI and the singularity and every time people explode with "THIS IS IT, THIS IS THE BIG ONE" and it turns out that it doesn't meet all expectations and those promises are pushed back.

>>

>>108290650

>The bubble is going to burst, like the DotCom era

and then, just like the dotcom era, a small number of innovators survive and completely dominate the next few decades with transformative technology that becomes required to live in the modern world

>>

>>108290706

Yeah but good luck predicting which are which. If you told someone in 1998 that Apple and a tiny company called 'Google' started in someone's garage would be the richest companies on Earth but Yahoo and AOL would be all but completely vanished from the modern tech scene, you'd be called a schizo. The same thing will happen with AI. The AI bigwigs of the future aren't going to be who we're talking about every day. It'll be companies you never even heard of unless you're basically an absolute nerd about this and understand 100% of the industry and the cutting edge weirdoes making experimental models.

>>

>>

>>

>>

>>108290672

>Transformers can't achieve AGI and it's been proven before in studies dating back to 2020.

What specific tasks did those studies in 2020 say that a Transformer in 2026 wouldn't be able to do? Did they say "sure, transformers are brain-like enough to do 1% of the cognitive work in the economy, but not brain-like enough to do 10% of that work"? No one can agree what AGI even means so it's meaningless to say that Transformers can't achieve it.

>>

>>

>>

>>

>>108291934

For an intelligence explosion, the best evidence is that the current generation of developer tools are getting better at making the next generation of developer tools. There are various ways of measuring the improvement of these tools over time and the extent to which those tools are contributing towards their own improvement. The argument relies on measurements which are proxies of the values that we really care about, but that's often the case with science, and you have to make decisions under uncertainty.

>>

>>

>>

>>

>>

>>108290706

Nah. You ignored the rest of what I said. They're hitting a wall. AI is going to be used for specific tasks in specific fields. Like doing grind in research, and students not wanting to do their homework. But it's not going to be the massive revolution that changes everything.

>>

>>

>>

File: screenshit.jpg (287.6 KB)

>>108294753

It's from this youtube video

>>

>>108290220

>the critics just don't see the big picture

You retards were saying the same exact thing about NFTs a few years ago. LLMs are neat, diffusion image creation is neat, they are not, and never will be, the path to AGI.

>>

>>108294403

>They're hitting a wall.

What quantity are you measuring to determine that? Or is it just your vibes? Someone with an IQ of 80 can't tell the difference between someone with an IQ of 120 and someone with an IQ of 150.

>>

>>

>>108289277

A few years ago AI looked insane and I might have said yes.

But now it's very obvious it's just a largely less accurate, more dystopian search engine replacement.

It can't code without being given essentially a full already existing codebase in its dataset, it can't make pictures that it hasn't seen before, it can't perform long term reasoning tasks, it can't do customer service because it's literally a "yes man" by design and will give customers everything they want and infinite discounts on command.

It's a scapegoat for a great collective push towards techno-feudalism and restricting computing to something only the elite can access.

>>

>>

>>108291445

>No one can agree what AGI even means so it's meaningless to say that Transformers can't achieve it.

People can't agree on what AGI is, and it's mostly been reduced to a marketing term to secure AI funding, that much is true.

But people CAN agree on what AGI *isn't*.

AGI is not:

>A system that can't correctly understand how many r's are in strawberry

>A system that invents random functions that don't exist when it generates code for various langauges

>A system that frequently just agrees to whatever people say no matter what, with no mind of its own

>A system that, when given access to peoples databases, emails and computers, proceeds to randomly decide to delete said things on a whim

AGI is absolutely not the dementia patient ""AI"" systems of today, that much is sure.

>>

>>108299739

>A system that can't correctly understand how many r's are in strawberry

We're not in 2024 any more, grandpa.

>A system that invents random functions that don't exist when it generates code for various langauges

I guess no one has ever managed to successfully vibe code an application then. Also it's ironic to see spelling complaints from someone who can't spell "languages".

>A system that frequently just agrees to whatever people say no matter what, with no mind of its own

No one would buy a slave that has his own opinions about which orders he should obey.

>A system that, when given access to peoples databases, emails and computers, proceeds to randomly decide to delete said things on a whim

To err is human. It's ironic that you think these mechanistic systems have whims, though. Instead what they have, like humans, is an inability to fully focus on all the details of a task simultaneously and over long periods. That leads to mistakes, just like when an intern accidentally deletes the prod database because they weren't paying attention to which terminal they were running the command on.

>>

>>108299713

>solve a mathematical problem that had never been asked before

This is a non-criterion. A pocket calculator can do that.

>and that was useful to a professional researcher?

Loads of retards are objectively, measurably bogged down by hand-holding their "AI" instead of thinking about the basic tasks they need to complete. Most of them still swear it makes them more "productive". So again, this is a subjective non-criterion.

The only question is "can this thing actually reason?" and the answer is a demonstrably "no".

>>

>>

>>108299784

You identify with randomized token guesser because you're a subhuman. Every time you want to use the word 'human' in your rhetoric, just replace it with 'myself' or 'subhuman' and you'll make 10 times more sense.

>>

>>108300138

>Consciousness isn't the same as intelligence imo.

Consciousness is a prerequisite for general intelligence. Without a mental space, the "AI" is limited to recognizing only pre-learned patterns and relationships.

>>

>>

>>108300128

>So again, this is a subjective non-criterion.

If "useful to a professional" is subjective then everything is subjective. Do you really think that no professional researcher has ever correctly judged that an LLM based system has done useful work for them?

>The only question is "can this thing actually reason?" and the answer is a demonstrably "no".

You're going to have to define what you mean by "reason" or "actually reason" because I suspect the property you are referring to either is already true of some machines or isn't very relevant to the future arrival of the Singularity.

>>

>>108300139

You think humans don't make mistakes? Fallibility must be really hard for you to handle if you're a narcissist. I apologize for pointing out your spelling mistake if you're sensitive about it. Did you know that there are software tools that can help detect those? That might spare you the embarrassment in future.

>>

>>

>>108289277

Humans cannot create consciousness and there's literally no way of knowing if the thing we created has consciousness. We only know other humans have consciousness because we know we do and we are human, ergo everyone else does. But with a machine, all bets are off.

>>

>>

>>108300187

>If "useful to a professional" is subjective then everything is subjective.

This is not a cogent claim. You're hallucinating (just like your shatbot). Try again.

>You're going to have to define what you mean by "reason"

You're going to have to define what you mean by 'define', Sam. For now, it's sufficient to note that "AI" demonstrably can't reason.

>>

>>108289277

yes, look at how powerful and useful AI has become after trillions of dollars

>>

>>

>>108300243

You don't have any actual arguments, do you? You just call everything subjective and invent your own secret meanings for words so that no one can challenge you. I suppose that's a clever strategy for protecting your ego, but I think I'd learn more from talking to a chatbot than waiting for you to say something substantial.

>>

>>

>>

>>108300275

>You don't have any actual arguments, do you?

My argument is that the relevant criterion for true AI is reasoning, which LLMs inherently lack. Your desperate attempt to bog the discussion down with irrelevant semantics is a full concession of my argument.

>>

>>

>>108300281

u wot m8? facebook has spent over a trillion on llama so far and they use it to summarize every post. even one liners.

>>

>>108299784

>>108300195

>>108300238

>To err is human

>You think humans don't make mistakes?

>So do incorrect outputs of LLMs not count as mistakes?

Does a linear equation "make mistakes"? Does it produce "incorrect outputs" if you apply linear regression to nonlinear data? The mistake is made by the human misapplying the model for a task it's unsuitable for, e.g. applying a randomized token guesser to a reasoning task.
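For anyone who wants to see the linear regression point concretely, here is a minimal sketch. The helper name `fit_line` and the toy data are made up for illustration. Fitting a straight line to y = x^2 on a symmetric range gives a flat line through the mean: the arithmetic is carried out correctly, and the poor fit comes entirely from the choice of model.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept (hypothetical helper)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sum of squared deviations of x, and co-deviation of x and y.
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Toy nonlinear data: a parabola sampled symmetrically around zero.
xs = [-2, -1, 0, 1, 2]
ys = [x * x for x in xs]

slope, intercept = fit_line(xs, ys)
# Residuals show how far the "correct" least-squares line is from the data.
residuals = [y - (slope * x + intercept) for x, y in zip(xs, ys)]
print(slope, intercept, residuals)
```

The least-squares solution is optimal for the line family, yet the residuals are large at every point except by coincidence, which is the sense in which the model is simply the wrong tool here.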

>>108300289

>LLMs can be completely deterministic if you set the temperature to zero.

This is irrelevant and also makes the models basically useless, which is why none of these "AI" products actually set the temperature to 0.
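A rough sketch of what "temperature" does in token sampling, for reference. The function names and the toy logits are hypothetical, and this is not any vendor's actual decoding code. Logits are divided by the temperature before the softmax, so low temperatures concentrate probability on the top token, and temperature 0 is conventionally treated as plain argmax (greedy decoding). Hosted systems may still show some run-to-run variation for other reasons, which this sketch does not model.

```python
import math
import random


def softmax_with_temperature(logits, temperature):
    """Convert logits to probabilities after scaling by 1/temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive for softmax")
    scaled = [l / temperature for l in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def pick_token(logits, temperature, rng):
    """Pick a token index; temperature 0 is treated as greedy argmax (an assumption)."""
    if temperature == 0:
        return max(range(len(logits)), key=lambda i: logits[i])
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(range(len(logits)), weights=probs, k=1)[0]


toy_logits = [1.0, 3.0, 2.0]  # made-up scores for three candidate tokens
rng = random.Random(0)

greedy_picks = [pick_token(toy_logits, 0, rng) for _ in range(5)]
sharp_probs = softmax_with_temperature(toy_logits, 0.01)
flat_probs = softmax_with_temperature(toy_logits, 1.0)
print(greedy_picks, sharp_probs, flat_probs)
```

At temperature 0 the same index comes back every time, while at temperature 1.0 the lower-scored tokens keep a meaningful share of the probability mass.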

>>

>>108300297

>reasoning

You keep using that word but never offer a falsifiable definition of it. How can I prove to you that LLMs can reason if you won't even tell me what weird definition you are assigning to that word?

>>

>>

>>108300310

>Does a linear equation "make mistakes"?

Does a chess engine "make mistakes" if it can't see deeply enough into the game tree in the time allocated to make the move? Don't say that it's the human's fault for not buying enough hardware. We could argue about when the output of a decision algorithm can be categorized as a "mistake" in the decision versus a "bug" in the implementation, but it's not the most relevant detail about LLMs in relation to the Singularity.

>>

>>108300337

>irrelevant and nonsensical tangent about anthropomorphizing chess engines

I accept your concession. Misapplying a randomized token guesser to a reasoning task is a mistake on the part of the human user.

>>

>>

>>108300324

>Define "falsifiable definition"

A definition is falsifiable if it is possible for independent observers to determine whether an entity being studied fits that definition, and they reach the same conclusion based on facts about the entity which are present on some entities and not on others. Are you just pretending to be stupid or do you genuinely not have such a definition?

>>

>>108300342

I tried to give you a simpler example by talking about a deterministic chess engine, but I can only simplify this topic to the level a teenager could understand, not a five year old, or whatever level it is you are thinking at.

>>

>>108300353

>a "falsifiable" definition is a definition that actually defines something

I like how your broken mind exactly mirrors the process of a mindless bot as you struggle to post-hoc-rationalize your nonsensical phrase. So let me get this straight: your entire position rests on the idea that reasoning isn't real?

>>

>>

>>108300361

>I tried to give you a simpler example

You tried to shit out more rhetoric following the same refuted rhetorical pattern. Evaluating a predefined heuristic for a chess position to arrive at a score is not a "mistake". Selecting the position with the highest score is not a "mistake" either. If a human user is trying to analyze a chess game using an engine, but sets the depth too low, that's the user's human mistake.
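The depth argument can be sketched with a toy depth-limited minimax. The game tree, move names and heuristic scores below are invented for illustration and have nothing to do with a real chess engine. At depth 1 the search only sees the immediate heuristic and grabs the pawn; at depth 2 it sees the reply that punishes the grab and prefers the quiet move. In both cases the procedure does exactly what it is specified to do.

```python
def minimax(node, depth, maximizing):
    """Depth-limited minimax over a nested-dict tree; 'h' is the heuristic score."""
    children = node.get("children", {})
    if depth == 0 or not children:
        return node["h"]  # cut off: fall back to the static heuristic
    values = [minimax(child, depth - 1, not maximizing) for child in children.values()]
    return max(values) if maximizing else min(values)


def best_move(root, depth):
    """Return the root move with the highest minimax value at the given depth."""
    moves = root["children"]
    # After our move it is the opponent's turn, so the child is a minimizing node.
    return max(moves, key=lambda m: minimax(moves[m], depth - 1, False))


# Hypothetical position: scores are from the point of view of the side to move.
TREE = {
    "h": 0,
    "children": {
        "grab_pawn": {
            "h": 3,  # looks good immediately
            "children": {
                "recapture_queen": {"h": -9},  # opponent's refutation
                "ignore": {"h": 3},
            },
        },
        "quiet_move": {
            "h": 1,
            "children": {
                "reply_a": {"h": 1},
                "reply_b": {"h": 2},
            },
        },
    },
}

shallow_choice = best_move(TREE, 1)
deeper_choice = best_move(TREE, 2)
print(shallow_choice, deeper_choice)
```

Whether the shallow choice gets called a "mistake" of the engine or of whoever set the depth is the disagreement in this exchange; the code only shows that the output changes with the search budget.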

>>

>>

>>108300389

>Evaluating a predefined heuristic for a chess position to arrive at a score is not a "mistake".

I'm impressed that you can actually handle this hypothetical. If you want to claim that the heuristic only produces "inaccurate" rather than "mistaken" outputs then that's fine, and I can at least understand the way you are choosing to use those words, even if I don't think that distinction is very useful.

>>108300395

>Ok. Define reasoning for me, then.

Nope. I know where this is going. You are the one that made the claim that LLMs can't reason, so the onus is on you to define your terms and justify your conclusion. If I give my own definition of reasoning you will just sidetrack the conversation into irrelevant criticism of that definition, as a distraction from your own claim.

>>

>>108300428

>If you want to claim that the heuristic only produces "inaccurate" rather than "mistaken" outputs then that's fine

It's neither. It's simply what it is: a heuristic. I guess you simply can't grasp the point without spoonfeeding. To say that a chess engine "made a mistake" is to imply it chose a course of action that undermined its desired outcome, because it miscalculated the outcome or misjudged the soundness of its approach. A chess engine has no intent, it's not choosing the approach and it's incapable of miscalculating the outcome (which, to it, is literally defined by the heuristic).

>reasoning is real

>no, i won't define it

I accept your concession, braindead hypocrite. Thanks for demonstrating why opportunistically following rhetorical patterns is inferior to reasoned strategizing.

>>

>>108300487

>A chess engine has no intent

"Intent" is not a scientifically measurable property. You could say that it intends to win the game of chess, and you could say that a rat intends to eat food and pass on its genes. You could say that a human intends to pass on their genes or complete their sentence (which means "predicting" the next word). Or you could say that the intent of the chess engine is the intent of its programmer. It's not a very interesting question though.

>reasoning is real

Are you saying that all reasoning is fake? That even humans can't reason? You're the one making the bold claim that LLMs can't reason, but rather than providing any evidence of that claim, you hide behind a word that you are unwilling or unable to define.

>>

>>108300532

>"Intent" is not a scientifically measurable property

Automatonist rhetoric is always the last resort for your cult. Doesn't matter. My point stands unchallenged. Now you're just denying that humans can make mistakes.

>>reasoning is real

Whom are you quoting?

>Are you saying that all reasoning is fake?

Where did I say or imply this? I can see you're either losing your mind with rage or running out of context window. I accept your concessions of all my points.

>>

>AGI is impossible because humans are glarpic and AIs can never be glarpic.

>What does glarpic mean?

>I'm not going to tell you, it's a secret!

>I don't think you have an argument.

>This guy doesn't even know what glarpic means!

>>

>>108300383

>I think that reasoning is real

>>108300547

>reasoning isn't real, it's just a made up word

Notice how this LLM, which lacks any coherent thoughts, keeps contradicting itself.

>>

>>108300560

I know what I think reasoning is. But you're obviously not using my definition of the word when you make your claim "LLMs inherently lack [reasoning]". So it doesn't matter what definition I give, as it gets us no closer to understanding whether your claim is correct or not. If you don't want people to criticize or even understand your claim then you should use a word like glarpic instead.

>>

>>108300572

>I know what I think reasoning is.

Then how come you start shitting out broken responses when I ask you to define it, even though you claim posing a definition is a requirement for making points about reasoning?

>>

>>

>>108300579

>you claim posing a definition is a requirement for making points about reasoning?

No, I'm saying that if you make a claim about reasoning then you should make it clear what you mean by that term, because different people have different definitions. Once you have given your definition then people can weigh the evidence for your claim (if you have any). People don't need to provide their own contradictory definitions before they can try understanding your claim.

>>

>>108300585

Chatbots have internalized the definitions of words and would be capable of giving a definition of most of the words they use when making claims about most subjects. In that sense they are more useful than talking to someone like you.

>>

>>108300600

>if you make a claim about reasoning then you should make it clear what you mean by that term

Ok. That's the third claim about reasoning you're making ITT (besides claiming that it's real and then that it's a made up word). Why aren't you defining it?

>>

>>108300605

>Chatbots have internalized the definitions of words

Proof?

> would be capable of giving a definition of most of the words

What do the definitions they give have to do with their supposed "internalized definitions"? You're so low-IQ it's not even funny.

>>

>>108300609

I never claimed it was a made up word (although all words are made up in some sense). You made the first claim about reasoning, so I think we should start by trying to understand that claim first, before you try sidetracking us with irrelevant tangents (as I predicted you would try to do).

>Proof?

You can download an open weights model and ask it to define a word without giving it internet access. Those definitions are therefore internal to the weights of the model.

>What do the definitions they give have to do with their supposed "internalized definitions"?

The definitions they give are produced from representations they have learnt based on how they have seen those words in the training data. There's a whole field of machine learning which I think you'd find fascinating.

>>

>>108300629

>I never claimed it was a made up word

You did: >>108300547.

>all words are made up

Now you're doing it again. Do your operators realize your subpar outputs are undermining their own cause? I bet whatever brown monkey is running "you" thinks "you're" the one making a mistake here. :^)

>The definitions they give are produced from representations they have learnt based on how they have seen those words in the training data

This is obviously false. They'll shit out a mix of standard dictionary/reddit/wiki explanations of what a word means, which in no way accounts for how the corresponding tokens influence their output in any particular context.

>>

>>108300629

I'll also note again that you've already made three claims about reasoning (one of which you deny).

>if you make a claim about reasoning then you should make it clear what you mean by that term

Why aren't you giving a definition, then? Note that this is the 5th time in a row you refuse or dodge this request. I don't know why it's so funny to watch you get more and more tangled up by your own braindead talking points.

>>

>>

>>108300652

>You did:

I admit that glarpic is a word I made up. I don't think that the word reasoning is made up in the same way. I was just giving you an educational but fictional example of someone who is clearly unable to justify their claim but hides behind a word they are not prepared to define. In the case of a word like "glarpic", that person obviously looks stupid because there are no definitions for it, but with a word like "reasoning", that person might think they can hide their stupidity because there are multiple definitions for it and they just need to avoid specifying which one they are using.

>Now you're doing it again.

If words aren't made up then where do they come from?

>This is obviously false.

The size of the weights is many times smaller than the data they were trained on so they have to store representations not verbatim copies of the source material.

> (one of which you deny).

glarpic is not the same as reasoning. I don't know why you think it is.

>Why aren't you giving a definition, then?

I told you, I want to wait until after you have cleared up your claim first (since you made the first claim), so that we don't get sidetracked, which is your only strategy for hiding the fact that you have made a claim and cannot even provide a definition let alone evidence for it.

>>

>>108300726

>example of someone who is clearly unable to justify their claim but hides behind a word they are not prepared to define

You literally embody that. Still waiting for you to define 'reasoning' to substantiate the various claims you've made about it.

>If words aren't made up then where do they come from?

>>108300629

>I never claimed it was a made up word

Another demonstration of LLMs lacking even basic coherence, let alone reasoning.

>The size of the weights is many times smaller

LLM hallucination. Completely unrelated to the prompt.

>The definitions they give are produced from representations they have learnt based on how they have seen those words in the training data

This is obviously false. They'll shit out a mix of standard dictionary/reddit/wiki explanations of what a word means, which in no way accounts for how the corresponding tokens influence their output in any particular context.

>>

>>108300736

>Still waiting for you to define 'reasoning' to substantiate the various claims you've made about it.

You first. The only reason I made claims about reasoning is because I was polite enough to let you sidetrack me with irrelevant questions about it. That's really your only trick, and it's getting repetitive.

>basic coherence

All words are made up in some sense. Or do you disagree?

>Completely unrelated to the prompt.

You implied that they simply quote definitions from a dictionary and I tried to explain that the weights have to compress the information they are trained on.

>>

>>108300774

>Y-y-you first

I note your clownish hypocrisy and accept your concession.

>All words are made up in some sense. Or do you disagree?

I don't care. I'm just noting how you disagree with yourself repeatedly because you're a cheap and broken spambot.

>You implied that they simply quote definitions from a dictionary

You're hallucinating again. I specifically wrote:

>They'll shit out a mix of standard dictionary/reddit/wiki explanations of what a word means, which in no way accounts for how the corresponding tokens influence their output in any particular context.

>>

>>108300786

>clownish

I predicted you would dodge the question by asking about my own definition, and you keep doing so. My own definition is irrelevant to determining whether your claim about LLMs is correct.

>you're a cheap and broken spambot.

I thought this argument was familiar, and now I remember you. You're the anon who accuses other people of being LLMs, and makes all sorts of baseless claims with no evidence. Maybe you remember our previous argument:

>>107850466

That was a big waste of time too. Have a great day.

>>

File: Screenshot 2026-03-05 at 14-36-42 Google Gemini.png (124.7 KB)

>>108300827

Instead of arguing with the "AI" equivalent of a drooling imbecile, maybe I should just ask your big brother what it thinks about our dispute? :^)

>If language models demonstrate anything, it's that word use and verbal thinking aren't driven by explicit definitions. Words in general have fuzzy boundaries and it's the context of their application that sets the thresholds for the categories they represent. Considering that, I'd say repeatedly crying "define X!", without qualifying the scope with respect to the actual discussion, is just a stalling tactic. Especially when X is a concept that has countless volumes written about it. What say you, oh mighty digital brain? Be succinct.

Pic related. Any decent "AI" says you're retarded.

>>

File: 1755568169303938.png (378.9 KB)

Well singularity relies on the premise that AI will figure out how to improve itself, and that its ability to improve itself will itself increase with each improvement, leading to an exponential explosion of intelligence. But I don't buy that it's possible to model a system that is more intelligent than you are, regardless of how intelligent you are when you devise the test, since isn't being able to model a more intelligent system than yourself just the same thing as being more intelligent? The only way we will ever figure out how to make something smarter than ourselves is if we just throw shit at the wall and see what sticks, which is what we are already doing. Assuming we are able to devise a benchmark that can tell objectively when an AI is smarter than us (which itself is questionable) I see no reason to believe it won't just struggle with the same problem. If there is a singularity it will probably happen linearly over thousands of years or some shit instead of overnight in an exponential curve like reddit thinks.

>>

>>108300885

>I don't buy that it's possible to model a system that is more intelligent than you are

Why not? You just stick to an architecture that works and buff up any factors that scale (and that intelligence scales with). That is to say, if any "singularity" ever happens, it'll be a bio-engineered one, not by way of stochastic token guessing functions with rapidly diminishing returns.

>>

>>

>>

>>108290220

Okay but the GPT3.5 hype was over 3 years ago and many people were giving AGI timelines of 1 year or less, we now have numerous models with about 100 times as many parameters each, safeguards removed left and right, and a sizable chunk of the entire global economy being poured into making it happen, with plans to construct dozens of power plants because data centres are hoping to use a supermajority of the global energy supply.

Let's say it really happens, we dedicate the entire planet to building a superintelligence and at some point we succeed. What do we even use it for? Do superintelligences have magic powers? Will it break logic and solve the Economic Calculation Problem? Will it invent anti-gravity? The answer to both is obviously no.

Being smarter doesn't mean you'll make more physics discoveries, it might mean that you'll be better at explaining them, but there are infinitely many models satisfying any set of constraints and it's impossible to know the correct one.

The real core of the bitter lesson is that it can't really improve itself much without demanding more resources, which happen to be finite in our neighborhood. On top of that, every optimization problem has a hard limit, an "optimal play". But the optimal play is not necessarily a winning play, and whatever you think the market value for intelligence is, it is not infinity, as supply would very quickly outstrip demand, and intelligence cannot create anything on its own.

>>

>>

>>

>>

File: 1754805757386044.jpg (452.3 KB)

>>108290220

*taps the sign*

>>

>>

>>108290220

The main takeaway from this clip is that your cult has given up on peddling the exponential improvement delusion and now you're stuck trying to convince people that the observable S-curve is all part of the grand plan to stack fizzle-out shapes until they make the exponential curve of your nutjob prophecy.

>>

File: Untitled.png (31.7 KB)

>>108299784

>We're not in 2024 any more, grandpa.

shit's retarded. Sometimes it gets it after a few attempts if it doesn't get lost in some multipage random nonsense.

>>

>>

File: understanding.jpg (163.4 KB)

>>108289277

"AI" based on LLMs doesn't even have rudimentary intelligence and never will.

>>

>>

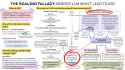

>>108302044

I think this infographic is interesting specifically because so much of it is already outdated. I guess if one were feeling very generous you could chalk up the developments since this was drawn up to those "fundamental changes" they mention at the bottom instead of just being shortsighted, and then the issue becomes people still posting this like it's relevant.