Thread #108293080

File: Screenshot_20260304_204546_Kiwi Browser.jpg (925.8 KB)

AGI is not possible through LLMs.

59 Replies

>>

>>108293080

1) The insightful part of that post is that current AI networks are "incredibly simplistic", but this has been stated since the boom began, even by OpenAI's founder/CEO.

To paraphrase him, the "breakthrough" was transistors getting cheap enough: they realized they could simply stack more and more to get better results.

The algorithm underlying it is very simple, and similar to autocorrect.

2) What is intelligence?

>>

>>108293155

>>108293174

>>108293225

>>108293243

I don't care about AGI. I just want coders to be replaced and cause mass unemployment.

>>

File: 1761704186870.jpg (1.2 MB)

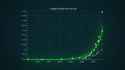

It's happening

>>

>>108293080

ChatGPT said most of the claims here are overly simplistic and misleading, but that they do have a point about scaling alone not being enough.

I wonder how many /g/ anons know what an MC is and how MCs compare to LLMs.

>>

>>108293080

Agreed, they are certainly interesting and useful tools, but "they" are not intelligence.

Just pattern matchers with extremely large datasets.

LLMs basically have huge maps of how to react and respond to a lot of situations, but because the datasets are so incredibly large and the data is compressed (or may be of bad quality), there are errors in the response when it is extracted.

Modern transformers LLMs can be industry disruptive tools for automation but all the hype about AI or that "it's alive" or AGI by 2030 is yet another jewish grift to get money.

Chinamen know this intrinsically and it's why they release everything to the public, which makes the kikes mad that they can't have a monopoly on the tool and the narrative.

>>

File: 1767925483174.webm (641.1 KB)

>>108293080

Science, retard

>>

File: 106004479_p1.jpg (1.8 MB)

>>108293080

>AGI is not possible through LLMs.

Wow it's been fucking obvious since the beginning though? Only paid shills (pretend to) believe otherwise.

Machine learning in general is a dead end. The only reason it's been pushed so much is that megacorporations like Google, Amazon and Microsoft had all this data they (illegally) acquired and had to monetize it somehow. Furthermore, they own the majority of datacenters, so they can make double money from this. This was the objective all along: make money for burger megacorps.

>>

>>108293080

This is so fuckin obvious though. Everyone should realize this immediately within literally one single millisecond of learning the basic idea of how LLMs work. They are still really useful tech as content aggregators and generic content generators. But in terms of AGI, they will only be able to exist as a sort of I/O layer for some other type of 'intelligence', for which I don't know of any great leads yet.

>>

>>108293760

Holy fuck this is a load of horseshit.

>But it can spit out more refined garbage! Over a longer CONTEXT WINDOW!!!

I love LLMs, I think they are great, but they are inherently flawed in ways that can never be fixed even with infinite scaling and refinement. They serve a certain purpose, and that purpose is not AGI.

>>

>>108293080

Everyone but the "AI bros" knows that. In fact, most AI bubble companies are buying time with agentic AI and its integration with robotics so that they have a chance to actually implement world models.

>>

>>108295335

Incorrect. Software Architects do all the real work. Programmers do the grunt labor. Architects may look like they are doing nothing. This is incorrect, however. They just spend much of their time in their mind, considering the best approach to the problems at hand. It takes a genius to be an Architect.

>>

File: 1769223758282970.mp4 (3.3 MB)

Hype helps big tech companies consolidate their position as absolute providers of every computing service conceivable. They want to create a reality in which private home processing power is impractical or useless for ordinary people.

With the integration of smartphones into people's daily lives (an abstract black box device over which people have the smallest control possible), people have become accustomed to giving up their sovereignty over the electronic devices they own as private property.

I believe the last line of defense for private home ownership is video games.

If people surrender their gaming rights, it's over.

>>

>>108297816

>I believe the last line of defense for private home ownership is video games.

The ones who gave up any ownership rights at the first opportunity, in the form of Steam? Those retards? I guess we're "cooked fr fr" then.

>>

>>108297224

Even most of the AI industry itself believes you need more than just LLMs to reach AGI.

Most researchers seem to think you'll need a number of specialized models working together to form an AGI-like model, likely with specific models for reasoning, symbol manipulation, and cognition, as well as massive data sets of sanitized knowledge to learn from.

It's why Gemini has so many different models they're constantly tweaking and updating independently. Models for research, models for coding, models for image generation, models for music generation, etc.

Internally and with universities, they're even working on models trained on quantum data using (usually simulated) quantum hardware.

>>

File: reverie_alphonse_mucha.jpg (555.1 KB)

>>108293080

Not really scientifically accurate, though I do think the claim is true. Problem is that [LLM + other things] may be sufficient for something like AGI, just as LLMs alone were not sufficient to solve ARC-AGI-1 but LLMs + test-time compute are.

To actually critique what he said: Markov processes are irrelevant, because any non-Markovian process can be represented by a Markov process whose states are augmented to carry the relevant history. So even if LLMs could only simulate Markov processes (dubious claim, not a hard thing to tack on), it wouldn't matter.
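A minimal sketch of that state-augmentation trick, assuming a made-up two-symbol process where the next symbol depends on the previous two. The table `SECOND_ORDER`, its probabilities, and all function names are hypothetical and only there for illustration. Lifting the state to (previous, current) pairs gives an ordinary first-order chain that assigns the same probability to every sequence.

```python
# Hypothetical second-order process over the symbols "A" and "B":
# the next symbol depends on the previous TWO symbols, so it is not
# Markov when the state is a single symbol.
SECOND_ORDER = {
    ("A", "A"): {"A": 0.1, "B": 0.9},
    ("A", "B"): {"A": 0.5, "B": 0.5},
    ("B", "A"): {"A": 0.7, "B": 0.3},
    ("B", "B"): {"A": 0.8, "B": 0.2},
}


def lift_to_first_order(second_order):
    """Build a first-order chain whose states are (previous, current) pairs."""
    lifted = {}
    for (a, b), dist in second_order.items():
        # From pair state (a, b), emitting c moves to pair state (b, c).
        lifted[(a, b)] = {(b, c): p for c, p in dist.items()}
    return lifted


def prob_second_order(seq, second_order):
    """Probability of seq[2:] given seq[:2], using the original rule."""
    p = 1.0
    for a, b, c in zip(seq, seq[1:], seq[2:]):
        p *= second_order[(a, b)][c]
    return p


def prob_lifted(seq, lifted):
    """Same probability, computed as a walk over pair states."""
    pairs = list(zip(seq, seq[1:]))
    p = 1.0
    for current, nxt in zip(pairs, pairs[1:]):
        p *= lifted[current].get(nxt, 0.0)
    return p


LIFTED = lift_to_first_order(SECOND_ORDER)
```

The cost is state-space size: carrying k symbols of history over an alphabet of size n needs n^k states, which is why the construction is cheap to state but says nothing about efficiency.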

Criticizing ANNs is fair, but it's dubious how much biologically plausible structure is needed. RNNs based on perceptrons can still represent arbitrary neural ODEs and pretty much all models of actual neurons are neural ODEs (in varying levels of sophistication). Attention can too, in the limit of infinite context, but most big labs are moving to some form of recurrence mixed in anyway.
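To make the RNN/ODE link concrete, here is a small sketch under simplifying assumptions: a single scalar "neuron" obeying tau * dx/dt = -x + tanh(w * x + u), with hypothetical names throughout. A forward-Euler step of that ODE has exactly the form of a leaky recurrent cell. It only illustrates the correspondence for one toy equation and is not a proof of the general claim.

```python
import math


def leaky_rnn_step(x, u, w, alpha):
    """One recurrent update: x <- (1 - alpha) * x + alpha * tanh(w * x + u).

    This is the forward-Euler step of tau * dx/dt = -x + tanh(w * x + u)
    with alpha = dt / tau.
    """
    return (1.0 - alpha) * x + alpha * math.tanh(w * x + u)


def run_rnn(inputs, w, tau, dt, x0=0.0):
    """Unroll the recurrent cell over a sequence of scalar inputs."""
    x = x0
    alpha = dt / tau
    for u in inputs:
        x = leaky_rnn_step(x, u, w, alpha)
    return x


def exact_solution_no_recurrence(u, tau, t, x0=0.0):
    """Closed-form x(t) for the special case w = 0 and constant input u."""
    target = math.tanh(u)
    return target + (x0 - target) * math.exp(-t / tau)
```

With w = 0 the ODE is linear, so the unrolled cell can be checked against the closed-form solution; shrinking dt brings the two closer, as expected for Euler integration.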

Motor cortex is probably the worst example of something that works like an ANN too, he's just talking out of his ass. Maybe he meant cerebellum? Still untrue though. Motor cortex is just as sophisticated as any other piece of cortex, except maybe for being agranular (which is just cause it receives no primary sensory input).

Either way, I pray for a mini-Winter too so that my pet interest (biologically plausible learning) can save the day.

>>

>>108293327

It's not just more efficient, it's completely different.

The human and animal brain is able to learn with very small data and with very high uncertainty. Machine learning collapses if its training data includes malicious data, while humans are used to living in a hostile environment full of lies and hostile adversarial entities.

>>108297386

>>108297910

All the alternative "world models" and all the specialized models are still big data machine learning slop, LLMs or diffusion. Even the advanced stuff like LeCun's world models is a different spin on this.

It's big data machine learning slop all the way down

>quantum data using (usually simulated) quantum hardware

Just add more buzzwords! Quantum agentic AGI copilot in two more weeks!

>>108297816

They want "all interfaces to computing is a chatbot", they hate that we get to talk to the computer with formal languages like programming languages, the goyim must not have an interface that is not filtered through a channel trained on kosher data and that doesn't have (((safeguards))).

>>

>>108298548

The Church-Turing thesis says that anything that is possible to compute can be computed on a Turing machine. You are using a Turing machine right now (up to finite memory), which means you could theoretically be running AGI on your machine right now.

The problem is the software not the hardware, and quantum computing has nothing to do with it.
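As a rough illustration of "the software is the problem", here is a tiny Turing machine simulator with a hypothetical rule table that increments a binary number. All names are made up for this sketch. A real computer has finite memory, so it only matches the idealized machine up to that limit, and the sketch says nothing about whether AGI is computable.

```python
# Head movement per step: left, right, or stay.
MOVES = {"L": -1, "R": 1, "N": 0}

# Hypothetical rule table: (state, symbol) -> (write, move, next_state).
# Starting on the rightmost bit, it adds 1 to a binary number.
INCREMENT_RULES = {
    ("carry", "1"): ("0", "L", "carry"),  # 1 + carry = 0, keep carrying
    ("carry", "0"): ("1", "N", "halt"),   # absorb the carry and stop
    ("carry", "_"): ("1", "N", "halt"),   # ran off the left edge: new digit
}


def run_turing_machine(rules, tape, state, head,
                       halt_state="halt", blank="_", max_steps=10_000):
    """Run the machine until it halts and return the non-blank tape contents."""
    cells = dict(enumerate(tape))  # sparse tape: position -> symbol
    steps = 0
    while state != halt_state:
        if steps >= max_steps:
            raise RuntimeError("step limit reached without halting")
        symbol = cells.get(head, blank)
        write, move, state = rules[(state, symbol)]
        cells[head] = write
        head += MOVES[move]
        steps += 1
    lo, hi = min(cells), max(cells)
    return "".join(cells.get(i, blank) for i in range(lo, hi + 1)).strip(blank)
```

For example, `run_turing_machine(INCREMENT_RULES, "1011", "carry", 3)` walks the carry leftward from the last bit. The same loop runs any other rule table unchanged, which is the sense in which the hardware is generic and the program is the hard part.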

>>

>>108298482

The current state of the art in world models is barebones of course; pretty much everything has been function approximation. Imo we need a more deterministic approach when it comes to the structure. Endlessly feeding data to the machines won't make us advance.

>>

>>108293225

>2

Cognition of the surrounding context through the combination of sensors that filter raw reality into a locally verified model and a cortex system that maps the filtered data chunks to the action requested by the cognition driver that started the loop.

>>

>>108298482

>The human and animal brain is able to learn with very small data and with very high uncertainty

The body is super old, and carries most of its functions over from the DNA program deployment (body-wide hormone channels, or heartbeat patterns, for instance).

We share a large fraction of our genes with bananas, mostly the instructions for building and recycling protein molecules (hence viruses). With primates we humans share a bit more. For animals as a group, most primitive choices are fast, threshold-based binary choices whose outcome is also fast to verify as the correct shortcut pathway or not.

Also related to conversational LLMs: Star Trek: TNG dealt with molecules as a memory module (for a nav map). In theory you can store "quantum" values as increments and decrements from the mean.

>>

File: 1667624223349390.png (41.7 KB)

>OP being a fucking newfaggot who can't quote

>>