Thread #108295959

File: 1752899592735123.png (149 KB)

/lmg/ - a general dedicated to the discussion and development of local language models.

Previous threads: >>108290857

►News

>(03/03) WizardLM publishes "Beyond Length Scaling" GRM paper: https://hf.co/papers/2603.01571

>(03/02) Qwen 3.5 Small Models (2B, 4B) released: https://hf.co/Qwen/Qwen3.5-4B

>(02/26) Qwen 3.5 35B-A3B released, excelling at agentic coding: https://hf.co/Qwen/Qwen3.5-35B-A3B

>(02/24) Introducing the Qwen 3.5 Medium Model Series: https://xcancel.com/Alibaba_Qwen/status/2026339351530188939

>(02/24) Liquid AI releases LFM2-24B-A2B: https://hf.co/LiquidAI/LFM2-24B-A2B

►News Archive: https://rentry.org/lmg-news-archive

►Glossary: https://rentry.org/lmg-glossary

►Links: https://rentry.org/LocalModelsLinks

►Official /lmg/ card: https://files.catbox.moe/cbclyf.png

►Getting Started

https://rentry.org/lmg-lazy-getting-started-guide

https://rentry.org/lmg-build-guides

https://rentry.org/IsolatedLinuxWebService

https://rentry.org/recommended-models

https://rentry.org/samplers

https://rentry.org/MikupadIntroGuide

►Further Learning

https://rentry.org/machine-learning-roadmap

https://rentry.org/llm-training

https://rentry.org/LocalModelsPapers

►Benchmarks

LiveBench: https://livebench.ai

Programming: https://livecodebench.github.io/gso.html

Context Length: https://github.com/adobe-research/NoLiMa

GPUs: https://github.com/XiongjieDai/GPU-Benchmarks-on-LLM-Inference

►Tools

Alpha Calculator: https://desmos.com/calculator/ffngla98yc

GGUF VRAM Calculator: https://hf.co/spaces/NyxKrage/LLM-Model-VRAM-Calculator

Sampler Visualizer: https://artefact2.github.io/llm-sampling

Token Speed Visualizer: https://shir-man.com/tokens-per-second

►Text Gen. UI, Inference Engines

https://github.com/lmg-anon/mikupad

https://github.com/oobabooga/text-generation-webui

https://github.com/LostRuins/koboldcpp

https://github.com/ggerganov/llama.cpp

https://github.com/theroyallab/tabbyAPI

https://github.com/vllm-project/vllm

>>

File: really.png (1.6 KB)

>>

>>

>>

this might sound kinda crazy but do people pool compute resources across multiple smaller machines, e.g. NUCs, to run LLMs?

I bought a bunch of NUCs a few years back because I thought it would be fun but never thought about using them for this

I do have a chunky machine with 32GB ddr5 ram and I intend to buy a gpu for it at some point, probably second hand

I don't really want to spend any more than I already have

the total RAM comes to 64GB

and if I add in my laptop's DDR4 RAM it comes to 80GB

could I run a general LLM with this much and what do people use to pool it?

Claude mentioned exo.
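
For a rough sense of what 80GB of pooled RAM could hold, here is a back-of-envelope sketch. The model sizes, the 4.5 bits per weight, and the 25% reserve for the OS, KV cache and buffers are all illustrative assumptions, not measurements. It also treats the pool as one block, while in practice each machine only holds its own share of the layers and speed is limited by the network between them.

```python
# Back-of-envelope check: do the quantized weights fit in the pooled RAM?
# Model sizes, bits per weight and the reserve fraction are illustrative assumptions.

def weights_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate size of the quantized weights in GB (1 GB = 1e9 bytes)."""
    return params_billions * bits_per_weight / 8.0


def fits(params_billions: float, bits_per_weight: float,
         pooled_gb: float, reserve_fraction: float = 0.25) -> bool:
    """True if the weights fit after reserving part of RAM for OS, KV cache and buffers."""
    usable_gb = pooled_gb * (1.0 - reserve_fraction)
    return weights_gb(params_billions, bits_per_weight) <= usable_gb


# 32GB desktop + 32GB across the NUCs + 16GB laptop, per the post above
pooled_gb = 32 + 32 + 16

for params in (35, 70, 120):  # hypothetical model sizes in billions of parameters
    size = weights_gb(params, 4.5)
    verdict = "fits" if fits(params, 4.5, pooled_gb) else "does not fit"
    print(f"{params}B at ~4.5 bits/weight: ~{size:.1f} GB, {verdict} in {pooled_gb} GB pooled")
```

Fitting in memory only says the weights load; it says nothing about how usable the resulting tokens per second will be.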

>>

>>

File: lmg user.jpg (159.7 KB)

>>108295959

>>

>>108296013

https://github.com/ggml-org/llama.cpp/tree/master/tools/rpc

>>

File: 1742937188633674.png (510 KB)

https://arxiv.org/abs/2512.01797

>They solved AI hallucinations

>>

>>

>>

>>

>>

File: you.jpg (193.9 KB)

>>108296054

>>They solved AI hallucinations

>>

>>

>>

>>

>>

>>

File: 2026-02-20_190043_seed1_00001_.png (1.4 MB)

>>108198958

Any updates on this?

>>

File: 1772652718333219.png (1.4 MB)

Just give me the Engrams already

>>

>>

>>

>>

>>

>>

>>

>>

>>108296313

>>108296311

Yes but it’s not awesome last I tried it.

There’s also a visual novel mode as well as a few other ways to rig up your waifu aside from AI art, if you care to set them up.

>>

>>

>>

File: british.jpg (221.2 KB)

>>108296352

here's your authentic british experience

>>

File: 1753003751529458.png (159.9 KB)

>>108296443

no wonder why Miku doesn't want to deal with them

https://www.youtube.com/watch?v=IzDmMQ7SVPc

>>

File: Is Alibaba retarded?.png (750.7 KB)

Imagine making such a home run and getting fired anyway.

>>

>>

>>

>>

>>

>>

>>

>>

>>

File: 1750811103430221.jpg (162.6 KB)

>>

>>

>>

>>

>>108297064

https://huggingface.co/spaces/pliny-the-prompter/obliteratus

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

I don't know why people are surprised about the Qwen drama. Alibaba has many more GPUs at their disposal compared to smaller Chinese teams yet they only train small to medium models and their models aren't noticeably better. This points to mismanagement of resources

>>

>>

>>108297113

>Your model will not refuse and the cooming quality will remain

First, if it stops refusing, NO, it will NOT remain the same. Second, why do people need to automate this? Isn't half of the fun trying to finetune the models yourself?

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

File: ourhero.png (488.8 KB)

Will he save open-source?

>>

>>

>>

File: 1762782616143386.png (55.3 KB)

>>108296467

>>108296977

>>

>>

File: 1766842862628387.png (286.9 KB)

>>108297365

i said fuck it and am trying it anyways, will post thoughts after

>>

File: 1742570571210357.png (110.6 KB)

What's he doing wrong?

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

File: 1744149959711839.jpg (199.1 KB)

>>108297439

>>

File: 1557206102045.jpg (94 KB)

>>108297439

He is mentally retarded (IQ below 85), as even a layman can diagnose from his beginning every sentence with an emoji and his reddit spacing while expressing that he has failed to perform the simplest of tasks.

>>

>>

>>

>>

>>

>>

>>

>>

File: 1768664255363023.png (265.3 KB)

lmao it's so fucking over

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

File: 1759798679242615.png (391.2 KB)

https://www.reddit.com/r/LocalLLaMA/comments/1rl54v7/d_a_mathematical_proof_from_an_anonymous_korean/

>>

is there a local program which can be used for voice cloning? 11 is gey and I don't want to give them money just so I can make princess peach recite BWC copy pastas and use bluetooth to play it on my neighbors car stereo the next time he slowly drives down the block blasting his rap music

>>

File: 1770898121858835.png (68.4 KB)

>linking reddit

Git gone and stay gone

>>

>>

File: 1766250133966068.jpg (337.4 KB)

>>108295959

>>

File: 1751059461704218.png (961.3 KB)

We're safe (for now)

>>

>>

>>

>>

>>

>>

>>

>>108298195

Long term China is selling not just ai but a whole technology stack. They want nations to use Chinese chips, phones, ram, ai, social credit, etc etc.

The US is also doing something similar basically advanced nation as a kit. Some guy in Africa or other country agrees to partner with one of the giants and they buy the whole kit from either state.

Open source is part of the Chinese plan and a great way to get people to buy in to the Chinese platform.

You see this in smaller scale when you have Intel and AMD vs Nvidia. The smaller players embrace open source while the big player goes closed.

>>

File: speculative decoding.png (552.8 KB)

►Recent Highlights from the Previous Thread: >>108290857

--Paper: Speculative Speculative Decoding:

>108292842 >108292890 >108293624 >108293853

--Papers:

>108295483 >108295969

--Local LLM coding workflows and integration tools:

>108295899 >108295909 >108295920 >108295978 >108295996 >108296037 >108296144 >108296160 >108296207 >108296410 >108296437 >108296739 >108296788 >108296800 >108297123 >108297193 >108296462 >108296536 >108296568 >108296541 >108296628 >108296644 >108296750 >108296694 >108296787

--Qwen's inefficiency vs MiniMax's distillation strategies:

>108294923 >108294960 >108295008 >108295021 >108295116 >108295156 >108295202 >108295230 >108295251 >108295312 >108295353

--Qwen3.5-27B GGUF quantization performance evaluation:

>108293551 >108293583 >108293897 >108294067 >108294093

--Yuan 3.0 Ultra 1T parameter MoE model announced with skepticism:

>108294663 >108294669 >108294682 >108294704

--Yuan3.0-Ultra MoE model release and skepticism:

>108293837 >108293904 >108294134 >108293917 >108293925

--Nvidia Pascal GPU support ending in AI/ML libraries:

>108293714 >108293994 >108294087 >108294443

--Distributed model inference over slow interconnects deemed impractical:

>108295999 >108296044 >108296072 >108296130 >108296214

--Anthropic overtaking OpenAI in US business AI chat subscriptions:

>108291455 >108291566 >108294506 >108294530 >108294871 >108294970 >108295456

--Mistral Labs announced for experimental community models:

>108293284 >108293312 >108293340 >108293343 >108293360

--Alibaba Qwen team restructuring and resource allocation disputes:

>108293036 >108293041

--Verify-after-edit strategy boosts Qwen3.5 coding benchmark performance:

>108297248 >108297281

--Testing lcpp script with transformers 5 branch for gguf quantization:

>108293341

--Miku (free space):

>108291091 >108291631 >108292815

►Recent Highlight Posts from the Previous Thread: >>108291145

Why?: >>102478518

Enable Links: https://rentry.org/lmg-recap-script

>>

>>

>>

>>

>>

>>

just tricked an eldritch bodystealing entity that (she) would turn into my devoted lover if I cum inside her, and so I did, and she did turn into my eternally devoted wife that takes bodies of other girls to fuck me at my gesture

whew all in a days work

>>

>>

File: file.png (250.5 KB)

she's not buying it

>>108298838

>>

Which of these is best for longer RPs?

https://github.com/unkarelian/timeline-memory

https://github.com/aikohanasaki/SillyTavern-MemoryBooks

https://github.com/qvink/SillyTavern-MessageSummarize

>>

So I got hired by a small startup to build a harness. Somehow I was the best candidate; I honestly applied just for fun thinking I was going to be rejected. My biggest accomplishments were some diffusion finetunes and comfyui nodes lol. Anyways, any tips?

>>

>>

>>

>>108298974

Smart context management is very important. If you use thinking models and feed the whole thinking process of every previous request into the model, then you're going to hit high input token counts very quickly (expensive and answers get worse).
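
One way to do that is to drop the reasoning from earlier assistant turns before resending the history. A minimal sketch, assuming the model wraps its reasoning in `<think>...</think>` tags and that messages are role/content dicts (both are assumptions, check what your model and API actually emit); `strip_reasoning` is a hypothetical helper name:

```python
import re

# Assumption: reasoning is wrapped in <think>...</think>; adjust for your model.
THINK_BLOCK = re.compile(r"<think>.*?</think>\s*", flags=re.DOTALL)


def strip_reasoning(messages):
    """Return a copy of the chat history with reasoning removed from assistant turns."""
    cleaned = []
    for msg in messages:
        if msg["role"] == "assistant":
            # Keep only the final answer; the reasoning is not needed in later turns.
            content = THINK_BLOCK.sub("", msg["content"]).strip()
            cleaned.append({**msg, "content": content})
        else:
            cleaned.append(dict(msg))
    return cleaned


history = [
    {"role": "user", "content": "What is 2 + 2?"},
    {"role": "assistant",
     "content": "<think>Simple arithmetic, 2 + 2 = 4.</think>\nThe answer is 4."},
    {"role": "user", "content": "And doubled?"},
]

trimmed = strip_reasoning(history)
print(trimmed[1]["content"])  # The answer is 4.
```

The original history is left untouched, so the full reasoning can still be logged while only the trimmed copy goes back to the model.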

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

File: 1750253687269722.png (1.2 MB)

>>108298564

remember 3/9 is miku day

>>

>>

>>

>>

File: Screenshot 2026-03-05 014421.png (122.6 KB)

>>108297171

Practically every Chinese LLM is some version of LLaMA.

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>108299135

show me the robots doing acrobatics and martial arts from your country anon.

https://www.youtube.com/watch?v=mUmlv814aJo

also tell me how many FUCKING ARXIVS HAVE FUCKING CHINESE AUTHORS

DUMB FUCK.

>>

>>

File: 1751679404081754.png (31.2 KB)

Should have used local lol

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

File: 1748409523070593.jpg (55.4 KB)

>>108299252

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>108299362

>>108299349

two faggot open sores losers!

>>

>>

>>

>>

>>

>>

File: 1763826594163623.jpg (282.2 KB)

>>108299387

Sure you are

>>

>>108299346

>sillytavern

most of the rube goldberg stuff in there was made to support models that could barely handle 2k context

just use a normal chat frontend, you are not using a llama 2 or mistral finetroon anymore

>>

>>

>>

>>

>>

>>

>>108299399

I don't understand this logic

Yeah you might not need every feature in ST, but what do other front ends have that ST doesn't? Unless you're far down the minimalism autistic retard rabbithole then what front end is better?

>>

>>

>>

How do I stop my agents from doing this:

Me: Agent make X, Y and Z

Agent: I made them

Me: Can you confirm you made Y?

Agent: (Realizes it didn't make Y just X and Z) Makes Y, and then replies yes I did

I would rather it answers the fucking questions instead of trying to save face.

>>

>>

>>

>>

>>

>>

>>

>>108299453

you sound like the KDE niggers. Ostensibly, KDE offers everything, and can even be turned into a tiler window manager. Realistically, only people with absolutely no taste would use that piece of shit DE.

>>

>>

>>

>>

>>108298228

the alternative is being bought out by some wall street private equity as the sellouts were trying to do with qwen. no surprise the ceo is stepping in when they were trying to pull a fast one on him like that.

>>

>>

>>

>>

>>108299463

I think sillytavern could use being more simplified by default but KDE is really useful (features like HDR or easyish yet advanced window management, etc) and caters to what most people like out of box. If you don't like it that's fine but for most people it's simple enough to use and has everything they want to use out of the box and that's not bad for a DE for most people so long as it doesn't go into the absolute stupid shit windows is doing lately.

>>

File: 1759820090246300.png (7.6 KB)

you zoomers don't remember how bad it used to be

the days when a 30b was huge and anything above 2k context was amazing

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>108299524

>>108299527

There are programs and stuff that use multimodal llms to constantly scan something and output text constantly, which can also be voiced instead of manually putting it in. I've seen it done with translation stuff (gamesentenceminer or luna translate) and stuff like skyrim mods, so in theory something that does this already exists more or less, but I wouldn't know what it is.

>>

>>

>>

>>

>>

>>

>>108299575

again >>108299546 I'm pretty sure it's possible at least in the simplest sense, doesn't mean the robot is going to move accordingly or anything and doesn't mean anyone who knows how is currently investing their time to make it a reality though. Be the change you want to see I guess and learn and stop relying on busy extremely tech literate people to figure it out and mass produce it for you.

>>

>>

File: 1758446804438438.gif (1.2 MB)

Just in case anyone's curious about why the thread is abnormally terrible, some seamonkey got banned for shitting up /aicg/ a day or two ago, so he's now shitting on our floor until his ban expires and he can go home

>>

>>

>>

>>

>>

>>

File: 1742292827180825.mp4 (2.2 MB)

>>108299527

>>108299546

>>108299555

>>108299563

SOON

>>

>>

>>

>>108299643

Why indeed, gnome sucks ass nowadays. But KDE is increasingly a default. Let me rephrase that then, even though I thought it was obvious what I meant: KDE has things that most people can or do make use of readily available.

>>

>>

>>

>>

>>

>>

>>

>>

File: 1760045837635957.png (40.6 KB)

>>

>>

>>

>>108299604

>>108299833

I have no idea who you're talking about, it all looks like about the same level of shitposting that happens sometimes.

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>108300034

how new r u?

>>101207663

>I wouldn't recommend koboldcpp.

>>

>>

File: z947ipfiw6ng1.png (674.7 KB)

>WTF, how can a 4B model be better at coding than a 480B one? What do other 476B parameters do?

wasting params on your stupid rp coom bs is leading to this and qwen's death, hope you're happy...

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

anyone else having issues with llama.cpp+qwen? it all worked great and i got up to 170t/s on the 0.8B, then suddenly it dropped to 120/130 t/s and the output was just garbage. after switching between 0.8B, 9B, 27B and 80B they all started generating garbage. is it like corrupting or reading stale memory or something?

>>

>>

I’m a software engineer who hasn’t gone deep into AI yet :(

That changes now.

I don’t want surface-level knowledge. I want to become expert, strong fundamentals, deep LLM understanding, and the ability to build real AI products and businesses.

If you had 12–16 months to become elite in AI, how would you structure it?

Specifically looking for:

>The right learning roadmap (what to learn first, what to ignore)

>Great communities to join (where serious AI builders hang out)

>Networking spaces (Discords, groups, masterminds, generals, etc.)

>Must-follow YouTube channels / podcasts

>Newsletters or sources to stay updated without drowning in noise

>When to start building vs. focusing on fundamentals

>I’m willing to put in serious work. Not chasing hype, aiming for depth, skill, and long-term mastery.

Would appreciate advice from people already deep in this space

>>

>>

>>108300299

ok i did this before and I know what's going to work. what you need to do is go here https://www.reddit.com/

>>

>>

>>

>>

>>108300284

I had this on ik_llama.cpp with 397b ubergarm q2. Restarting it fixed the problem and it hasn't happened again so I don't know. Speed dropped to sub-1t/s, then after restart went back to about 10. I had plenty of spare GPU and system memory, and fallback was disabled.

>>

>>

>>

>>

>>

>>108300299

There are AI PhDs with 10+ published papers all with 1000+ citations that can't even get INTERNSHIPS. There is no upgrade path for a regular software engineer into this field.

We have regulars on /lmg/ that train their own niche models, some even sota that aren't employed in the field.

We get people like you every other week and the answer is always the same, the industry is impenetrable even for domain experts and people making top of the line models. What makes you so special that you believe you can get your foot in the door?

>>

>>108300458

>some even sota that aren't employed in the field

>>108300442

>>

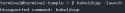

>slot update_slots: id 0 | task 27 | forcing full prompt re-processing due to lack of cache data (likely due to SWA or hybrid/recurrent memory, see https://github.com/ggml-org/llama.cpp/pull/13194#issuecomment-2868343055)

why does it always happen with Qwen3.5-35B-A3B? --swa-full doesn't do a thing. I'm on the latest version (8208).

>>

>>108300472

Not talking about the LLM finetuners. People like the reinforcement agent guy pushing the limits on AI that plays games on its own or that autist that pushed OCR to its absolute limits so that he could read every hentai doujinshi on the internet.

>>

>>

>>

>>108300499

No they don't. Fuck off bullshitter. Last posts were a couple of weeks ago where they affirmed they didn't work in the AI industry specifically.

Why are you trying to gaslight that software engineer into wasting his life trying to get an AI job when not even PhD top contributors and sota model developers get jobs?

>>

>>

>>

>>

>>108300521

I lurk the thread like everyone else and actually pay attention to those 2 because I use the manga translation tool, and the game playing one is just cool because the guy is blogging his entire journey from 0 knowledge to where he is now pushing sota. If you read through every thread you know exactly what's going on, fuck off troll.

>>

>>

>>

>>

>>

>>

>>

>>

File: 1772642445742946.jpg (57.6 KB)

>>108300589

>>108300593

wtf is going on another model i went from 39t/s to 48 t/s

>>

>>108300299

learn from experts

https://www.youtube.com/watch?v=1oS35oWWl28

>>

>>108300625

are you using llama-bench or just looking at tokens per second on first message? because it's going to fall off as it generates longer responses and fills more context or be higher if it just replies with a couple words
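
To see why a single reply is a noisy measure, here is a small sketch with made-up timings (the numbers are hypothetical, not measurements from any model); `tokens_per_second` and `aggregate_speed` are illustrative helper names:

```python
def tokens_per_second(n_tokens: int, seconds: float) -> float:
    """Generation speed for one reply."""
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    return n_tokens / seconds


def aggregate_speed(runs) -> float:
    """Total tokens divided by total time over several (n_tokens, seconds) runs."""
    total_tokens = sum(n for n, _ in runs)
    total_seconds = sum(s for _, s in runs)
    return tokens_per_second(total_tokens, total_seconds)


# Hypothetical timings: a two-word reply on an empty context
# versus a long reply that keeps filling the context as it goes.
short_reply = (10, 0.25)
long_reply = (800, 25.0)

print(tokens_per_second(*short_reply))  # 40.0
print(tokens_per_second(*long_reply))   # 32.0
print(round(aggregate_speed([short_reply, long_reply]), 2))
```

Comparing two models or builds only makes sense at the same prompt length and the same number of generated tokens, which is what a fixed benchmark run gives you.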

>>

>>108300031

you can use kobold's chat ui on llama.cpp:

https://github.com/LostRuins/lite.koboldai.net

it's the only thing of value from kobold anyway

>>

>>108300631

Bernie is such a good guy, I just wish he were more tech-literate. Instead of banning and slowing AI, why not nationalize it? But then again, after being sabotaged last time, there is 0% chance he'll ever get any additional power.

>>

>>

>>

File: 1761654164610191.png (317.5 KB)

local sisters i don't feel so good

>>

>>

>>

>>

>>108300129

I don't know if it's the fact that I'm using heretic but 27b sucks and repeats and spouts nonsense sooo much. The base model is too censored by default for my use case though and any prompt that worked before it rejects it.

>>

>>

>>

>>

>>

>>

>>

>>

File: file.png (637.4 KB)

>>108300698

>built for humanoids

>>

>>108300722

and also: the human form isn't any more optimal than something like animal/alien hybrid. Something like boston dynamics's spot dog with an arm protruding from the back thing could operate most human things just fine, and four legged creatures are more stable than humans. Why would anyone think we are the ultimate form? humans are the weakest animal, like, ever. We can't kill/hunt anything with our bare hands/teeth. A fucking raccoon will ruin your day. We aren't even adapted to the bare minimum of survival to most ranges of temperature on earth: without clothes and fire, we freeze to death, or burn under the sun. Humans are not to be imitated.

>>

>>108300722

those cars are mostly designed to transport humanoids to where they need to be to be productive, and robot cars already have even more investment than robot humans so it doesn't support the original complaint

>>

File: dont-fist-android-girls.png (187 KB)

>>108300698

Just say you want to put your dick in the robot.

>>

>>108300737

obviously humans are not the ultimate form, but in the short term they're by far the most useful. when we multiply our labor force by 100x with these things we can put them to work building the more efficient world and workers we'll need in the long term

>>

>>

>>

>>

>>

>>

>>

File: file.png (32.4 KB)

>>108300817

https://docs.axolotl.ai/docs/models/gpt-oss.html

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>108296023

>ASICs for AI when?

already exists bro https://chatjimmy.ai/

t. dixie flatline

>>

>>

>>

>>

>>108301481

https://www.shutterstock.com/image-photo/this-beautiful-roundabout-top-view-shot-1135833710

>upload date: 2018

>>

File: 1744155658238443.png (4 MB)

>>108301281

>If your vision of a dystopian future included robot monks presiding over ancient rituals, Kyoto University has brought that vision one step closer to reality. A research team from the university, in collaboration with the tech ventures Teraverse and XNOVA, recently unveiled a new AI-integrated robot monk — the Buddharoid — at the Shoren-in temple in Kyoto.

>The Buddharoid is designed to support the Buddhist clergy as Japan’s religious infrastructure faces a steady decline. It utilizes a system called BuddhaBot-Plus, a specialized generative AI derived from OpenAI’s ChatGPT that has been trained extensively on sacred Buddhist scriptures. This allows the robot to provide spiritual guidance on personal and social issues, like a real monk would.

>Beyond its conversational capabilities, the Buddharoid uses hardware — developed by China’s Unitree Robotics — to mimic the specific movements of a monk, including a slow gait, bowing and the gassho gesture of placing palms together in prayer.

>>

>>

File: 39642.png (59.5 KB)

>>108302087

close enough champ let's go

also why is OP an ultrafag who needs reminding who the queen of this site is?