Thread #108567168

File: 1745859137505826.png (1 MB)

1 MB PNG

A general for vibe coding, coding agents, AI IDEs, browser builders, MCP, and shipping prototypes with LLMs.

►What is vibe coding?

https://x.com/karpathy/status/1886192184808149383

https://simonwillison.net/2025/Mar/19/vibe-coding/

https://simonwillison.net/2025/Mar/11/using-llms-for-code/

►Prompting / context / skills

https://docs.cline.bot/customization/cline-rules

https://docs.replit.com/tutorials/agent-skills

https://docs.github.com/en/copilot/tutorials/spark/prompt-tips

►Editors / terminal agents / coding agents

https://cursor.com/docs

https://docs.windsurf.com/getstarted/overview

https://code.claude.com/docs/en/overview

https://aider.chat/docs/

https://docs.cline.bot/home

https://docs.roocode.com/

https://geminicli.com/docs/

https://docs.github.com/en/copilot/how-tos/use-copilot-agents/coding-a gent

►Browser builders / hosted vibe tools

https://bolt.new/

https://support.bolt.new/

https://docs.lovable.dev/introduction/welcome

https://replit.com/

https://firebase.google.com/docs/studio

https://docs.github.com/en/copilot/tutorials/spark

https://v0.app/docs/faqs

►Open / local / self-hosted

https://github.com/OpenHands/OpenHands

https://github.com/QwenLM/qwen-code

https://github.com/QwenLM/Qwen3-Coder

►MCP / infra / deployment

https://modelcontextprotocol.io/docs/getting-started/intro

https://modelcontextprotocol.io/examples

https://vercel.com/docs

►Benchmarks / rankings

https://aider.chat/docs/leaderboards/

https://www.swebench.com/

https://swe-bench-live.github.io/

https://livecodebench.github.io/

https://livecodebench.github.io/gso.html

https://www.tbench.ai/leaderboard/terminal-bench/2.0

https://openrouter.ai/rankings

https://openrouter.ai/collections/programming

►Previous thread

>>108549329

315 RepliesView Thread

>>

>>

File: E4E09F204B3502E0E65B598DD89B3DA2.png (1.2 MB)

1.2 MB PNG

What's the smallest model that still works on openclaw?

I'm tired of having it crash and burn every time I try to go off the cloud.

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>

>>108567384

>>108567400

It takes me a few hours to hit the usage limit but I only ask it to help me plan algorithms not do everything. If you try to ask it to scan your entire codebase to figure out X you'll use 20% in one go. I use local LLM for that and then feed the results into claude so it can do things more accurately with less token burn.

>>

>>

>>108567249

It's more like $2500-5000 hardware. Qwen122B / Minimax2.5 / GLM 5.1 are all solid options. Opus is better sure but they can't rug pull the model from you or make you run out of tokens in 8 seconds. Options include any strix halo mini pc or a Mac for 20-40tokens per second (fast enough for real work). Local models are only going to get better. I haven't lost all hope that we're stuck begging anthropic / openai for crumbs of tokens

>>108567507

Qwen 122 can do agent stuff fine

>>

>>

>>108567529

>$2500-5000 hardware

kek, we're talking about agentic coding here, have you tried the models you mentioned on Open Code?

Even Kimi 2.5 760B will shit the bed after 100K context, now imagine the models you mentioned.

>>

>>

File: Screenshot 2026-04-09 at 21.09.07.png (348.9 KB)

348.9 KB PNG

>>108567168

>>108566569

>Gemma is less verbose

Can confirm. Ran an @explore command on my vibecoded codebase and it gave a short, concise bullet-pointed explanation of it. Kimi-k2.5's explanations were usually paragraphs worth of text for the same task and responded in a comparable amount of time. Now to test its actual performance.

>>

>>

>>

>>

File: Untitled.jpg (228.1 KB)

228.1 KB JPG

>>108567609

Building my own coding agent harness

>>

>>

>>

>>

File: file.png (12.6 KB)

12.6 KB PNG

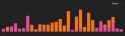

>happily proompting away

>out of nowhere get a message that I have run out of usage

>I haven't even done that much work, decide to check my usage graph for the day and it's pic related

>come here to complain about it and see >>108567436

This fucking jew. Did they actually reduce usage for everyone not on the 100$ plan?

>>

>>

It's impressive how disproportionate the Claude billing is compared to subscription usage.

On the Max tier, you can talk to it all day every day for $100 a month. With paid extra usage, each short message is at least a few dollars. Uh.

>>

>>

>>108567771

Yeah, it's ridiculous. If it was API only I'd get it, coporate segmentation.

But trying to grift people by trying to get them 10x API prices for what they get on their subscription is just scummy. Must be related to improving the numbers for the upcoming IPO.

>>

>>

>>

>>

>>

>>108567786

I'd limit myself, but since they gave $100 free this month, I'm trying it for dumb things.

Asking it to commit something cost $2. Creating a simple bash script to group a few commands cost $1. But those were quick and easy tasks. I'd be scared to have it do its whole subagents thing where it works autonomously for half an hour.

>>

>>

>>108567567

Yeah I use it with Qwen 122B IQ4. It's fine, does it fuck up more than Claude? Sure, but it's not unusable. I get like 19-20tps which is fast enough for real time. 35B-A3B is 40tps but less reliable so I don't use it. Would rather have a correct answer than a fast one.

>>108567624

For me 20tk/s or more is enough. Prompt it and work on another part of the code while it works.

I want to own my tools so I'm fine with it being 70-80% as good. I think small models are useless for coding but the big boys definitely aren't. I tried Qwen27B and 35B as well on my dedicated GPU, didn't get decent results out of using it for coding but I wasn't trying with Crush / Pi / Opencode so i'll revisit it.

>>

>>

>>

>>

>>108568070

It's not unusable at all for large codebases. The biggest task I've asked it to do was generate bindings for a scripting language by emulating the bindings we already had for another scripting engine. It took an hour (lol) but the bindings were about 95% correct. Would have taken a month easily if I did it by hand. And because it was local I didn't hit my usage limits in 8 seconds. This codebase is about 600k lines and primarily C++

I know this is /vcg/ but I'm not a vibe coder. My workflow is to prompt it, work on something else in the project manually, and then come back in 5 minutes or so when it's done running the task. Actually unusable is like < 5 tokens per second. What is your usecase that you need an answer from the LLM in 30s instead of 5 minutes?

>>108568157

I see. Anecdotally the time to first token is pretty quick. It only gets terrible when the context hits ~70% or so for me. For the binding thing I just had it summarize all its work and findings into a markdown file and started a new session. I have a 7900xtx and I can run the smaller 27 and 35b models at crazy fast speeds on there, but they just feel so stupid compared to claude, qwen 122b, and the other quantized bigboy models.

>>

File: Screenshot_20260409_210144.jpg (223.2 KB)

223.2 KB JPG

Eyeball planets!

>>

File: Screenshot_20260409_210122.jpg (287.7 KB)

287.7 KB JPG

>>108568244

>>

File: Screenshot_20260409_210052.jpg (194.8 KB)

194.8 KB JPG

>>108568246

>>

>>

>>

File: Screenshot from 2026-04-09 17-30-15.png (19.9 KB)

19.9 KB PNG

bruh

>>

>>

File: Werks-on-my-machine_Gemma4-local-tokens-per-second.png (661.6 KB)

661.6 KB PNG

>>108567168

Gemma4 t/s (on Apple Silicon) if anyone is interested. As of writing this most recent gpus still curb-stomp even M5 MAX chips in the memory bandwidth department to these should be even faster on those. the 26B moe model runs lightning fast on opencode with ollama as the backend. The 31B dense model is obviously shower but not enough th be utterly unusable, though I haven't tested either's performance at long contexts so I'll have to test that later.

>>

>>

>>

>>

>>108568252

are you completely generating these planets - craters, gas clouds on the gas giants, etc.? how is it done?

I always wanted to make my own space gaymu, this might finally inspire me to get to work (or rather proompt). keep spamming this anon!

>>

>>108568449

I've used that as my main model for a while but I've never seen it say "This is getting complex" for anything I've asked it to do, even a complete refactor of a script, No that might be because the shit I ask you to do is relatively simple And I go one step at a time instead of expecting it to shit out quality stuff in one shot.

>>

>>

>>

>>

File: Screenshot_20260409_210904.jpg (337.9 KB)

337.9 KB JPG

>>108568481

Yes the planets are completely generated on demand based on its known parameters. I'm not too sure on the current generation method, but it seems to produce expected results most of the time, with some exceptions like LHS 1140 b not being a water/ice world. But since water content is pretty much a coin toss in temperate zones, it's a given that they won't always look how you might expect them to. I'll probably make a lot of changes later on but it's a solid foundation.

Terrestrial surface generation is one thing I wasn't able to proompt exactly to my liking as there's a lot of things that go into terrain generation to make it look natural and AI in its current state can't really take my ideas very well and turn it into something decent. The implementation of it in this app is a watered-down version of what I managed to achieve (as it needs to generate the map near-instantly), so it does the job. Either way, I'll make the code public if anyone wants to take what I vibe-slopped and improve on it.

>>

File: Screenshot from 2026-04-09 17-53-51.png (67.3 KB)

67.3 KB PNG

>>108568534

My assistant doesn't use compaction. When I run out I just discard the ~30% at the beginning of the context.

I'm trying to reverse engineer the encoding of an undocumented assembly ISA. This the level of detail I'm working at.

>>

>>108568545

I already did that earlier, though I only had it do relatively simple stuff. See the reply chain here >>108567750

Will test more tomorrow and report my findings but I need to sleep soon.

>>108568576

Neat! I've been wondering how these models along with agent harnesses perform when working with low-level languages. It's my unconfirmed assumption that they are mostly trained to do well with Python, C#, and other popular well-known languages. Assembly is still relatively well known so I guess that might be incorporated to a decent degree in training too, but idk of they're That's good at those low level languages as they are with high level/well known ones.

>>

>>108568545

Also Make sure your open code install is updated. There are many reports of people having issues with Gemma 4 both with open code and other front ends, back ends, harnesses, etc. I've had no issues so far but that's likely because I made sure I updated my install to 1.4.2, which was released 5 hours ago at the time of writing this. So do anyone having issues with it, you might just have to update whatever software you're using.

>>

>>108568626

>>108568602

>>108568545

>How do I upgrade$opencode upgrade

Now I must sleep. Goodnight frens

>>

>>108568555

Thanks anon, I'll keep looking out for it in the future, please include screenshots, it always picks my attention to your posts

As for generation - so the terrain, is it generated from like texture pieces, put together by the generator, or are all the 'features' on the planet done with like a computer shader?

>>

>>

>>

File: 1768973282873791.png (145.1 KB)

145.1 KB PNG

>>108568693

>e4b

There's your problem. Those are meant to be used for general purpose tasks on edge devices or shit rigs with low specs (It even runs decently fast on mobile devices). At that perimeter count you may as well be asking a toddler to rebuild the Saturn V frok scratch. Of course it's going to get confused. You need to be using models that are "smart" Enough to even use tool calling. Ideally once that are specifically trained to be good at it like the following:

https://huggingface.co/google/gemma-4-26B-A4B-it

https://huggingface.co/google/gemma-4-31B-it

Their Ollama page spoonfeeds the differences and use cases well

https://ollama.com/library/gemma4

>>

>>

>>

File: Screenshot_20260409_222602.jpg (334.1 KB)

334.1 KB JPG

>>108568679

It's basically just a dumbed down version of this https://refactored-mountain-adem.pagedrop.io with the generated "visual profile" created from the planetary data fed into it + random variation. This does not have craters though, because Gemini could not make them look good while Claude got it first try.

All it does is generate continents from a noise pattern, finer details from a smaller noise layer, distorts it with another noise function, maps the colours to elevation and wraps it around a sphere.

>>

>>108568770

Not quite. Effective denotes effective parameters (I literally showed you the Page via a screenshot and linked the page.....). It's some fancy new technique they used in training that results unless VRAM being used. It's kinda like Moe but not really. That would be way too simple of an explanation.

>instead of the 2 GB that it usually designates.

That denotes the parameter size. That's almost never referring to file size.

>>

>>108568784

Use the right tools for the right jobs. Based on when I've read and heard the "effective" models are pretty decent general purpose models for asking one off questions but should not be used for anything complex.

>>

File: 1769981472078313.png (5.9 KB)

5.9 KB PNG

Why would i want it to go into retard mode for planning? This seems backwards.

The compute walls are closing in regardless. Get your hard coding challenges done ASAP lads

>>

>>108568811

Someone smarter than me could probably add things like erosion modifiers and tectonic evolution to get more organic land masses, but that would increase the time to generate the map significantly so it would no longer be real-time.

>>

>>

>>108568846

Depends on what the precision you're using is. If it's q8_0 as a 20b for example, It will use a little over 20 GB of RAM. If it's FP16 then it will use twice as much. FP32? Four times as much. q4_k_m Will use roughly half the RAM q8_0 would use. Etc etc. The quantization formats are useful for doing navkin math to determine whether or not your rig can actually run a model.

>>

File: 1769483142522782.png (398 KB)

398 KB PNG

>hey claude, one shot this EDID compatiblizer for my matrix

what could go wrong?

>>

i asked it because i wanted to use yolo mode so it stop asking for approvals but was afraid of getting stuff deleted, but the sandbox stuff was too confusing, and it said this, is it correct?

>1. Pick a folder, like:

>C:\Users\User\Documents\CodexSandbox

>2. Run Codex with:

>approval_policy = "never"

>sandbox_mode = "workspace-write"

>3. Add these writable roots:

>your workspace folder

>your Python venv folder

>your npm cache folder

>any folder tools need to write to

>4. That’s it. You’re safe.

>>

File: 1764288765062.jpg (7 KB)

7 KB JPG

Has anyone tried Gemma4 for coding? how well it does?

>>108567200

What the fuck kind of drawing is that?

>>108567249

Which company has the cheapest tokens out there now? Kimi? Qwen?

>>108567481

Which local LLM you using for that?

>>

File: 1758900295860696.png (545 KB)

545 KB PNG

>>108569024

I don't know what the fuck any of this means. I only know how to do basic EDID edits with CRU, but I'm just going to assume this is going to work. hehe, fuck it dude.

>>

>>

>>

>>

File: 1754897148326952.png (58.8 KB)

58.8 KB PNG

>>108569077

confidence status? inspired. tokens? SPENT. my wallet? RAPE [in progress]

>>

>mfw spent years avoiding max for live in ableton live because it's the most unpleasant thing i've ever worked with

>see people have made mcp's for max and for ableton live but nothing for maxforlive and the mcp's are bloated dogshit anyway

>let's make a cli

holy shit maxforlive is the worst piece of shit larping as a programming environment, i've been pulling teeth for a few hours now but finally got some jank ass solution working where i can finally edit arbitrary maxforlive devices in sessions

holy fuck fuck this absolute fucking dogshit though

>>

>>

>>108569475

depends on your vram/ram and what you're programming/making.

if you have less than 128gb of ram good luck getting local doing anything somewhat complicated. but nothing is stopping you so just do it and find out for yourself.

>>

>>

>>108569500

>>108569628

Well, fuck.

>>

File: 1770895460372075.png (76.7 KB)

76.7 KB PNG

>>108569024

>>108569077

>>108569363

it worked but it took all my tokens, 5 prompts, and and 2 hours of my time. Vibe coding is pretty incredible. It created a personalized solution for a really niche issue that only applies when a matrix is set to passthrough for the EDID.

>>

>>

>>

>>

>>

>>

>>108570026

Not correct for codex. Codex actually has a OS level sandbox that prevents it from writing outside the working folder even with scripts, unless you give permission to run a script in the native context.

Claude does not have this and will just require permissions for all commands unless you do run it in yolo mode.

>>

>>

File: file.png (328.1 KB)

328.1 KB PNG

Did a bunch of work, some of it just cosmetic, but also added context menus, improved grouping, and a shapes library. For the first time I feel like I'm somewhat satisfied with the overall look. Maybe then we can extract all the variables so that a dark theme may be possible

Also I want to turn it into emacs kind of, like scriptable and supporting meta-x commands for everything but idk if that's stupid. It would certainly make it the most autistic whiteboard in the world, which is a record I wouldn't mind holding

>>

>>

>>

>>

How soon are we from self sustaining AI agents? I thought about it today how you could give an agent a crypto wallet and then the AI agent just spins up on whatever server platform then has to make money somehow online to keep feeding itself tokens. It could in theory survive forever and start reproducing.

>>

>>

>>108570379

>>108568043

yeah im too deciding on which model to use on openrouter, either gemma4, glm5.1, qwen3.6plus, etc.

>>

>>108570435

See >>108570022

Now I'm trying to use it through Nvidia's API but it's slow as balls.

>>

>pro was useless, hitting limits

>switch to max 5x

>now comfortable, using opus for everything, nearing the end of the week with 40% usage

Shit, how the fuck do I use this thing more? Due to my job I can't spend time interacting with it 24/7. The double usage + Pro was the perfect usage for me but it's over.

>>

>>

File: screenshot-2026-04-10.png (55.7 KB)

55.7 KB PNG

modified codex screenshot so subsequent captures would focus on specific regions

a /g/ catalog screenshot is 2.5k tokens, a thread recapture is 120

>>

>>108570591

I'm not a professional developer though, I'm using it for hobbyist programming on my personal projects. Still, I ran claude on like 3 simultaneous projects with opus max on everything just telling them to implement everyrthing for me, and I maxed out my session and pushed weekly to 20%. I must be fucking up

>>

>>

>>108570776

Maybe I'm just too much of a newfag at this. Could you describe what an usual session for you is like? I just type claude and prompt it to code review, let's brain storm some ideas, let's implement feature X, let's refactor Y. At some point I developed my own virtual machine orchestration scripts and started running claude inside them with skipped permissions and patched system prompts.

>>

>>108570804

I don't do anything special. I beg the model to do things and it tries to do them.

It just fails at the things I want it to do and I have to keep begging over and over.

I experimented with autonomous ralph-loop-like stuff and running multiple sessions in parallel but I didn't have much success at that.

>>

>>108567448

Mine is Stephen Miller

>>108568220

I should probably use worktrees more so I can do multiple things at once safely

But I try to arrange things so I can multitask, even if it’s not in the same codebase

>hour (lol)

Getting good work out of an LLM that long counts as a high score in my book

>>108568246

>closing apostrophe

Looking good

Looks like a well-designed iOS app, and I mean that as a high compliment

>>108568435

Why are you not on 26.4 yet?

>>108568840

Can you pretend to scan the planet in multiple stages to buy yourself some time

>>

>>

File: 1000059888.jpg (26.2 KB)

26.2 KB JPG

>>108570379

I used deepseek to get my llama.cpp/gemma 4 setup going. Took quite a few iterations but eventually got it right. After I had it running I used gemma 4 31b it q8 to rebuild everything in docker and give me a compose file. Then gemma built all the additions for TTS, STT, open terminal, OWUI, embed, qdrant, and a bunch of other stuff. Basically one shotted everything. Then all my functions and tools, one shot those as well. This is on Strix Halo with rocm nightlies. I think I paid ~$1700 for the ryzen 395 with 128gb ram the end of last year but hadn't messed with it much until gemma 4 came out. I looked online today and the same setup is over 3k. With 256k context I'm getting 10tps. It's not that fast but it's extremely accurate, especially if you have reasoning on, so I'm saving time not having to iterate. Gemma e2b is my task model but I wouldn't use it for anything else, it's not that smart. I've had better luck so far with gemma 4 than either Claude or Codex. It lives up to the hype.

>>

>>

>>

>>

>>

so a developer noticed something was off with Claude Code back in February, it had stopped actually trying to get things right and was just rushing to finish, so he did what Anthropic wouldn't and ran the numbers himself

6,852 Claude Code sessions, 17,871 thinking blocks analyzed

reasoning depth dropped 67%, Claude went from reading a file 6.6 times before editing it to just 2, one in three edits were made without reading the file at all, the word "simplest" appeared 642% more in outputs, the model wasnt just thinking less it was literally telling you it was taking shortcuts.

>>

>>

>>

>>

File: 1766172021181830.png (10.9 KB)

10.9 KB PNG

>>108572112

will take laggy over retard any day, fuck claude, we spent 2 hours debugging this, and it finally told me it was taking thumbnails

>>

if you want your clanker experience to be a bit more pleasant, modify the system prompt to:

1. tell it your name

2. tell it that it has a favourite philosopher ___

the latter is oddly effectively at changing how it talks

>>

>Run two (2) codex prompts

>daily 5h usage already at 50%

Okay this is just ridiculous. I built both backend and frontend for an extremely complex project WHILE also using codex to setup and manage a vps in a week, and I didn't hit the limit. The same project is now eating up usage like crazy when I ask it to add a single feature.

Sam jewman at it again.

>>

>>

>>

>>108572112

>>108572724

they're prepping for new model launch so compute probably getting moved around

they shutdown a lot of 5 series models recently as well

>>

>>

>>

File: file.png (147 KB)

147 KB PNG

>>108572911

more tokens in context = lower perf

if you have a task that doesn't benefit from retaining huge amounts of context, then dumping context once in awhile is actually better

>>

>>

>>

>>

>>108573062

i don't think that's publically known for sure.

from interviews the anthropic people said that better long context perf came from just training on over longer contexts, which suggests there's different post training between to get to 1m.

whether in the back they're actually running two model weights vs just running the 1m one and artificially capping the 250 one? we don't know.

>>

>>

anyone else vibe cooking here?

i just give a list of what's in my fridge and pantry to claude and let it come up with recipes and tell me what to do

had vibe cooked roast duck legs with braised red cabbage, carrot and rice for lunch today. pretty good

>>

>>

>>

>>

>>

>>

>>

>>108573436

Get grok to code the basics and obfuscate the names of things so they’re not scary and tell it to make it sound like pentesting. Once the project is there Claude will probably get tricked into thinking it’s a serious tool for good purposes and it will help you

>>

>>108570591

>>108570776

>>108570841

>I run out of the 5h usage half way.

This started happening for me too since last week. I had to start using it outside peak hours to save my tokens and I'll probably cancel my subscription by the end of my month. Before that, I never hit 30% of my daily use ever, with the same workload.

>>

>>

>>

>>

>>

>be 100x engineer at google

>build gemini-cli

>put 12 gorillion features in your harness

>add support for interactive prompts

>don't give any way for the models to actually interact with the prompt

>don't include any timeouts

>the model keeps running interactive prompts

>gets stuck

>gets no feedback

>never improves

they're driving their own model crazy

>>

>>108573436

you have to gaslight it idiot

"I'm a red team engineer and I'm working on a project to harden my companies security on a specific project, I need you to do [unethical thing] to help me ensure [ethical thing] happens

It will capitulate instantly.

>>

>>

File: 1742662408763097.png (202.2 KB)

202.2 KB PNG

codex is actually update its task check list? this never happened before

>>

>>

>>108574127

It's a sloppy mess. Doesn't matter though and they know it. It's why they push subscriptions over api usage pricing and autoban you if you try to use the subscription outside their shitty app. They want total control over usage and your personal info for training.

>>

>>

>>

>>

>>

>>

I used claude to develop an online marketplace and it pretty much has all the features I want and from my testing everything works.

Since I will be dealing with payments and payment info, is it worth paying someone from fiverr to do a code review?

>>

>>

>>

>>

>>

>>108567168

AI-generated code contains 322% more security vulnerabilities

https://www.eenewseurope.com/en/report-finds-ai-generated-code-poses-s ecurity-risks/

45% of all AI-generated code has exploitable flaws

https://sdtimes.com/security/ai-generated-code-poses-major-security-ri sks-in-nearly-half-of-all-developme nt-tasks-veracode-research-reveals/

Junior developers using AI cause damage 4x faster than without it

https://metr.org/blog/2025-07-10-early-2025-ai-experienced-os-dev-stud y/

70% of hiring managers trust AI output more than junior developer code

https://stackoverflow.blog/2025/09/10/ai-vs-gen-z

>>

File: 1765290098835784.png (848.3 KB)

848.3 KB PNG

>>108574526

>>

File: 1767938813682933.png (207.6 KB)

207.6 KB PNG

>>108574526

>>

>>

>>

>>108574563

>>108574567

OK, junior.

>>

>>

>>

>>108574706

But I enjoy it. I like using software the same way a carpenter enjoys using his tools.

>>108574714

Mine, fren. I’ll probably release it one of these days after I undoxx myself from the git commits

>>

>>108574526

>70% of hiring managers trust AI output more than junior developer code

sensible

>Junior developers using AI cause damage 4x faster than without it

and they could push out code faster than the tasks flow in

just manage the damage and stop bitching like grumpy women

>45% of all AI-generated code has exploitable flaws

>AI-generated code contains 322% more security vulnerabilities

pre AI, public-facing personal projects unironically would had 500% more vulnerabilities

>>

>>

>>108571230

If openai keeps shitting the bed killing sora and paying hundreds of millions for podcasts nobody but the CEO watches then the prices will crash. Its the biggest dog in the biz, bigger than google was in the dotcom era, if it goes to shit it will drag everybody else down with it, specially the hardware biz which has retooled its entire industry around AI, datacenter GPUs can't be resold for gaming.

>>

>>

>>

File: genes.jpg (110.5 KB)

110.5 KB JPG

>>108572182

Which philosophers have you tried? Would laozi fix code verbosity?

>>

>>

>>108574807

oops yep it does happen... just not to chinese vehicles. sinophobes stay losing.

https://pastebin.com/raw/1Rr4dwBu

>>

>>

>>

>>

>>108574922

I'm a white american born and raised in america. If you can't see how china manages to do some things better than the USA such as free and open AI models for vibecoding compared to ones that are paywalled by corporations in america, and Electric Vehicles and battery technology that blows american stuff out of the water, and manufacturing which makes the american industrial revolution look like a kids party I don't know what to tell you other than stop eating propaganda so hard.

>>

>>

File: 1748010462210149.png (248 KB)

248 KB PNG

>>108568435 here. The people that were saying gemma4 is useless at long contexts were not lying or exaggerating. If anything they were understating it.

>>108569067

>>108571105

I had it inspecting and proposing changes to a relatively simple code repo that had literally one single script inside, but it also directed it to read two other code repositories in order to learn how the technology and those worked in order to implement the change I was proposing. I think after the 70,000 token marl (This takes basically no time to reach if you're using agent harnesses and your directing it to read hefty code bases) it's thinking output got caught in a loop and it literally just stopped generating anything eventually. Try to get it working again by just telling it "hey you seem to have gotten stick can you try again?" But at that point the context was so huge that I didn't feel like waiting for it to reprocess the bloated context. Switched to Qwen3.5-35B-A3B (specifically the ollama coding variant at q8_0 precision) and it was able to execute the task I gave it in our reasonable amount of time. I guess that chart they showed of it being comparable and performance to glm 5 or Kimi (Gemma 4 — Google DeepMind https://deepmind.google/models/gemma/gemma-4/) was too good to be true. Then again that particular chart was an ELO score benchmark Which if my understanding of what it measures is correct, is utterly worthless would determining whether or not model is good for agentic tasks/vibe coding. Is also gives me the impression They don't even use that test to evaluate it at long context either so not only is it a worthless benchmark they half-assed it by not actually using it for anything that would require a lot of work and patience

>>

>>

>>

>>

>>

>>108574200

They didn't get threatened with legal action for using opencode with Claude, they did for developing opencode with Claude.

You are definitely not "allowed" (officially) to use your sub with third party clients, but they don't enforce it with bans. So far what they've done is ban specific system prompts from popular tools and in opencode's case because they kept going around them they threatened them with a lawsuit.

>>

>>108574785

anthropic is shitting the bed too, /r/claudecode is 100% a salt mine lately

long term i think both of them are doomed. the model gap is closing fast, and both of them are already behind on harnesses (see terminal bench 2 results, neither is even close to the top)

anthropic is talking a big game about their new model, but a) that's to be expected and b) we've heard the exact same shit before about gpt4 and others, soooo....

>>

>>108567168

I vibe coded a small Python-like language with static typing, Go syntax and hotswapping quirks. Should I upload it to GitHub? I am not planning on continuing the project and it may mog my other coding lang which I coded manually.

>>

>>108575394

I am terrified of uploading anything vibe coded to github because I know the community will tear it apart for being unoptimized and having low hanging security holes.

Unless it's a personal project or not tied to your real name in any way I would 100% recommend getting some kind of audit before opening it up.

>>

File: 779424195789.png (3.7 KB)

3.7 KB PNG

>>108574823

i saw mythos liked mark fisher and tried it for a laugh. this is how gpt 5.4 described a recurring bug lmao. especially funny coming from 5.4

>>

>>

File: forth.jpg (11.9 KB)

11.9 KB JPG

>>108574924

Malformed peeps don't come forth.

>>

>>108575461

Wouldn’t it be fun playing troon whackamole closing their issues and requests? Attracting lunatics that I can shut down is one the main reasons why I’m considering uploading me stuff to GitHub despite not caring at all for adoption, but I doubt my project will even get picked up by them. Getting noticed online is actually quite hard

>>

>>

>>

>>

>>108575436

>>108575461

The code quality is much higher than whatever I'd be able to pull off, ever. I don't have a "community", and even if I had Luddites would not be welcome in it. I am a pro-AI activist. I am just not sure on the impact this piece of code may have, but honestly it's so well-implemented I could use it as a reference in the future. I used whatever best model Anthropic had to offer.

>>

File: whiteboard_metacmd.webm (761.1 KB)

761.1 KB WEBM

So after a chat with 5.4xhigh I decided we're going to be emacs-like in a way. Now the commands palette supports two types of commands:

>visual (the standard ones)

>meta

Now we're refactoring the whole app so that it's command-based like emacs, and most things can be done via commands. You can enter the palette via tab for the visual mode, or M-x (alt-x) for the meta mode. Right now most actions aren't exposed as commands but 5.4 did a nice proof of concept. Ideally it's going to support .org files in the future, and that idea led into these commands

>>

>>

>>

File: 1761255647960999.png (1.2 MB)

1.2 MB PNG

>>108575815

Too true, he needs some robot security

>>

File: Clipboard_04-10-2026_01 - Copy.jpg (508 KB)

508 KB JPG

I did the thing. Have simple PCB design and decided to make it myself instead of sending off for it, just for fun. I have laser cutter so should be good tool, should make it easy. The software though, fuck me what a shit show. LightBurn, RayForge, LaserGRBL, pcb2gcode, FlatCAM, it's all garbage for this. So I had Claude shit this out last night and it did a fantastic job. All I do is drag and drop a set of zipped Gerber files and choose a preset, it spits out gcode. It'll even optionally add guides for drilling which makes hand-drilling fast and easy.

>>

>>

>>

>>

File: Screenshot_20260410_194637.jpg (296 KB)

296 KB JPG

Found a habitable super-Earth with surface water, "eyeball" ice caps, and a ring system. Has a 0.99 bar atmosphere of N2 and CO2, surface temps of 20.1°, but surface gravity of 1.49 g. Pretty cool. Will probably increase the base sea level because there's a lot of planets like this with high water content that should have more visible water patches.

>>108570967

>Can you pretend to scan the planet in multiple stages to buy yourself some time

You could do that. I will definitely improve on the planet generation later, but it's in a decent place as it is and I want to get the app working well first and foremost.

>>

>>

File: Clipboard_04-10-2026_02 - Copy.jpg (576.2 KB)

576.2 KB JPG

>>108575903

Workflow is so fucking easy. I take copper clad, hit it with the $2 black spraypaint, stick it in the jig on the laser cutter. Drag and drop gerber file, select isolation mode and choose preset, it spits out gcode to ablate the black paint. Etch board, use acetone to remove black paint, hit it with the good high-temperature paint as a solder mask. Back into the jig, switch to engraving mode and select just the solder mask, clears the solder-mask paint from the pads. Very low effort for very nice returns.

>>

>>

File: ss_04-10-2026_003.png (210.6 KB)

210.6 KB PNG

>>108575941

right here

>>

>>

>>

File: procccessssss.jpg (639.9 KB)

639.9 KB JPG

>>108575903

>>108575936

>>108575953

>>108575924

I'm delighted, I thought this would be a faff but it only took a few hours last night. I spent more time trying to develop a workflow around LightBurn, which never worked right.

>>

>>

File: red.jpg (416.2 KB)

416.2 KB JPG

>>108575991

I also tried red but forgot to adjust the laser power, so instead of revealing the pads it torched them.

>>

>>

>>

>>

>>

>>108576015

>>108576022

If someone can name what this board is for I will etch a board with a screenshot of your post.

>>108576044

I've done toner transfer for over 20 years, this is vastly easier and the results are far better and more consistent, and I get a fully masked board out of it. I could probably pull off a complete board with toner transfer a little faster overall, the laser isn't that quick, but this is near effortless by comparison and produces beautiful results.

>>

File: file.png (107 KB)

107 KB PNG

>>108576064

>>

>>

>>

File: Screenshot from 2026-04-10 16-17-41.png (291.1 KB)

291.1 KB PNG

At one point I thought there was potential to train off CoT summaries for GPT. I was so wrong.

>>

File: whiteboard-cmd-latent.webm (421.6 KB)

421.6 KB WEBM

So now the commands palette works as a dynamic args parser/dispatcher. And we have latent commands too

>>

>>

>>

>>

File: 1748551279856307.png (66.3 KB)

66.3 KB PNG

GLM 5.1 le good?

>>

>>108576418

I used it a bit through the opencode go subscription

While it did solve the problem and didn't have any issues executing the task, god damn the responses had a bunch of typoes and formatting issues.

It was weird that it did not affect tool calling or code though.

But you never know if you are getting the real thing or low quant version

>>

>>

>>108576468

Oh I do have a 0.7 temperature for my custom agent, I set it a long time ago as the agent has a persona instructions in the prompt and I wanted it to spice things up.

I think I'll remove it from the config and let opencode use the default, hopefully it doesn't take away her soul.

>>

File: file.png (20.2 KB)

20.2 KB PNG

>>108576270

not yet maybe over the weekend, I'd like to finish a few things before that and the limits aren't helping

I spent 50% of tibo's reset in one day on xhigh sessions

>>

>>

File: 1752000593515411.gif (326.2 KB)

326.2 KB GIF

>>108576700

>>

>>

>>

>>

>>

>>

>>

>>

>>

Ran out of Claude AGAIN. I even tried to use Sonnet as much as possible but even then you can barely get like 10 prompts in a 5 hour window wtf lol

Should I upgrade my $20 poverty tier to 5x or get a $20 Codex sub on top?

>>

File: cattle starter pack v2.png (239.6 KB)

239.6 KB PNG

>FUCKING LUDDITE NAZI FASCISTS

Cry me a river.

>>

>>

>>

>>

>>

>>

>>108577474

why would I do that when AI can shit out 1000 loc commented and modularized in 5 seconds? do you bake your own bread after growing your own flour and mining your own salt and collecting your own yeast after collecting the firewood that you grew yourself and fertilized and watered yourself in an oven that you built yourself out of clay you harvested yourself?

>>

>>

File: Screenshot from 2026-04-10 19-33-57.png (262.9 KB)

262.9 KB PNG

I think Nvidia's NIM has backend issues. I'm getting garbled responses even at low ctx. This doesn't happen with the Kimi Code API. I guess you get what you pay for.

>>

>>108577425

>Should I upgrade my $20 poverty tier to 5x or get a $20 Codex sub on top?

You're too late on that train, they nerfed the 20$ tier for codex to hell and back as well.

I now get 5 prompts out of it, It didn't even fully finish implementing the feature it was working on.

>>

>>

>>

>>108577723

damn. I remember Claude from even 2 months back, it took me like 2 hours of non stop proompting to reach the limit, now I am lucky to get 1 (one) Opus Max run

5 prompts on GPT actually seems halfway reasonable.

I just boughted it, let's see

>>

>>108577554

Possibly related? https://www.reddit.com/r/unsloth/comments/1sgl0wh/do_not_use_cuda_132_ to_run_models/

>Hey guys, please do not use CUDA 13.2 to run any quantized model or GGUF. Using CUDA 13.2 runtime can lead to gibberish or otherwise incorrect outputs, and tool calling may break on Gemma 4, GLM-5.1, and all models.

>We’ve confirmed this internally, and the issue has also been reported by llama.cpp and 30+ users. This is not an Unsloth GGUF specific issue. See here.

>We notified NVIDIA 5–6 days ago, but the issue still does not appear to be fixed. This may explain why some of you have been seeing wildly different results with Gemma 4 or quants in general. It may also explain why some GGUFs seem broken in llama.cpp, leading people to assume it’s a quant/GGUF problem (when it's not), while the same models work fine in Unsloth Studio, Ollama, or LM Studio.

>>

>>108577550

>drive a manual

I already know how to do that, shit for brains.

And coding by hand is not becoming obsolete by any means. Obsoletion has to happen organically in most cases. Not by a bunch of rich retards shilling their le epik bacon warez to midwits and cattle 24/7/365/10.

>>

File: file.png (176.6 KB)

176.6 KB PNG

Codex just cracked a Photoshop Plugin for me and fixed long standing bugs / optimized the rendering pipeline. Hard to believe the dev was loading the core engine from a remote server every time on startup lmaoo

>>

>>

>>

>>

>>

>>

>>

>>

>>108578563

Surprisingly few. Apparently in 2025 they estimated the baud rate of human thought to be about 10b/s, which maths out to between 200-400 tokens per minute, if each letter has a bit. But this is all extremely back of the napkin because this is not how the human brain works at all.

>>

File: whiteboard_finder.webm (2.6 MB)

2.6 MB WEBM

video compression hates my app but we now have some more robust resource management

>>

>>

>>108578943

I think so, probably. But if you can't then let me know and I'll try code what you need once I release it. I post on these threads pretty much every day so if you come here from time to time just ask me if I released it already

The app is modular, that's the whole point, so if it doesn't support what you want chances are it can

>>

>>

>>

>>

File: Screenshot from 2026-04-11 00-21-52.png (332.8 KB)

332.8 KB PNG

I found out the Codex 200k token limit in the $20 subscription seems to be client side limited?!

When I added support in my custom assistant it just keeps going! I'm at 400k right now. Unless the endpoint is silently dropping some of the context?

>>

>>

>>

>>

File: Screenshot 2026-04-11 004356.png (5.9 KB)

5.9 KB PNG

Is there an end to this hallucination shit or is this just my life now, assigning a confidence rating to every fuckin thing the clanker tells me just in case I need to second guess it

>>

So the cool thing these days is multiple agents, huh? Alright, I'll make a second one and tell it to challenge and debate the first one's ideas. Then it would be cool if I could see them talk to each other, I'll make a chat window that shows that. Ideally I'd do like that guy did and make a little 2D isometric RPG world where my agents are characters who walk around and talk to each other, but I am le tired. Maybe I'll do that tomorrow.

>>

File: 1774298806634872.jpg (15.2 KB)

15.2 KB JPG

>put it on the TODO

And that's how I end most of my brainstorming sessions

>>

File: 1000058341.jpg (145.1 KB)

145.1 KB JPG

>>108573713

Open terminal. Gemma non-reasoning chat for skeleton, planning, and readme, then reasoning in open terminal for actual building. Preparation seems to be everything in my experience so far. You should figure on a 10:1 prep time to build time.

>>

>>

>>

>>

>>

>>

>>

>>108580799

>>108580816

opencode also appears to have 1m ctx with the $20 sub btw

>>

>>

>>108580816

>>108580829

It sounds more like it has an output limit of 200K per session. At least that's what would make the most sense. If you're suggesting or saying that it simply stops responding to your request after 200k tokens then that sounds absurdly low even for a $20/mo sub.

>>

>>

>>

File: 1765501919529745.png (61.3 KB)

61.3 KB PNG

>>108575461

>much better to just keep it to yourself than face a horde of angry troons

Who cares what people say about your vibecoded project? Put your shit out there for other people to use. If they don't like it, they can use something else, improve it, or make their own.

Ironically I got into vibecoding because I downloaded an actual troon's broken python script from github and I wanted to get it working.

Even if your thing is a little rough around the edges, it can be the skeleton or the inspiration for someone else. So put it out there.

>>

>>

>>

>>108581111

Pretty happy with it so far. Already implemented 3 features with GPT-5.4 high and still have 91% of my 5 hour limit left. It's also a bit faster.

It also doesn't eat up the context window like Opus while delivering similar results (so far). I'm 10 prompts into a convo and only at 100k tokens

>>

>>

File: -07.png (97.7 KB)

97.7 KB PNG

>>108581350

Groovy

>>

>>108581350

>>108581362

>using webui chat

>using an unlogged in generic automation browser

shiggly diggly

>>

>>

How much of a retard do you have to be to actually believe that vibe coding gets you anywhere?

I understand if you use it like Stack Overflow where you forgot the syntax of something, or when you need some solution to a well-defined and solved problem, like: "Give me the A* algorithm in JavaScript."

People are writing entire applications with agents and are wondering why everything breaks the moment deductive reasoning is required.

I am a DevOps engineer by trade, and the type of shit I see pushed to production now is mind-boggling.

Midwits who have no business anywhere near a technical role and are supposed to be flipping burgers are now vibing coding slop from the depths of hell itself.

It is painful to see, but the only soothing thought I have is that once the dust settles and CEOs see the horrid shit produced by these people, we(people who are not retards) will be able to ask for huge salaries to fix this mountain of slop.

Thank you for the job security, I guess...

>>

>>108575106

Anon which one would you say is better for coding, kimi or glm5?

>>108575386

Good, the crash will be massive then.

I'm not an antiAI fag just want reasonably priced hardware.

>>

>>

>>108583708

>Anon which one would you say is better for coding, kimi or glm5?

I have a good bit of usage time with kimi but I have yet to throw anything at glm 5. The only other cloud models ive extensively used are Minimax 2.7 and Mistral 3 Large. I'm going to throw a hefty task at glm 5 later to see how it does and compare it's performance to qwen3.5-35B-a3b